In this post, Volume Tiled Forward Shading rendering is described. Volume Tiled Forward Shading is based on Tiled and Clustered Forward Shading described by Ola Olsson et. al. [13][20]. Similar to Clustered Shading, Volume Tiled Forward Shading builds a 3D grid of volume tiles (clusters) and assigns the lights in the scene to the volumes tiles. Only the lights that are intersecting with the volume tile for the current pixel need to be considered during shading. By sorting the lights into volume tiles, the performance of the shading stage can be greatly improved. By building a Bounding Volume Hierarchy (BVH) over the lights in the scene, the performance of the light assignment to tiles phase can also be improved. The Volume Tiled Forward Shading technique combined with the BVH optimization allows for millions of light sources to be active in the scene.

Category Archives: General Purpose GPU Programming

Forward vs Deferred vs Forward+ Rendering with DirectX 11

In this article, I will analyze and compare three rendering algorithms:

- Forward Rendering

- Deferred Shading

- Forward+ (Tiled Forward Rendering)

CUDA Case Study – N-Body Simulation

In this post, I will analyze the CUDA implementation of the N-Body simulation. The implementation that I will be using as a reference for this article is provided with the CUDA GPU Computing SDK 10.2. The source code for this implementation is available in the “%NVCUDASAMPLES_ROOT%\5_Simulations\nbody” in the GPU Computing SDK 10.2 samples base folder.

I assume the reader has a good understanding of the CUDA programming API.

Introduction to OpenCL

In this article I will provide a brief introduction to OpenCL. OpenCL is a open standard for general purpose parallel programming across CPUs, GPUs, and other programmable parallel devices. I assume that the reader is familiar with the C/C++ programming languages. I will use Microsoft Visual Studio 2008 to show how you can setup a project that is compiled with the OpenCL API.

Optimizing CUDA Applications

OpenGL Interoperability with CUDA

In this article I will discuss how you can use OpenGL textures and buffers in a CUDA kernel. I will demonstrate a simple post-process effect that can be applied to off-screen textures and then rendered to the screen using a full-screen quad. I will assume the reader has some basic knowledge of C/C++ programming, OpenGL, and CUDA. If you lack OpenGL knowledge, you can refer to my previous article titled Introduction to OpenGL or if you have never done anything with CUDA, you can follow my previous article titled Introduction to CUDA.

CUDA Memory Model

In this article, I will introduce the different types of memory your CUDA program has access to. I will talk about the pros and cons for using each type of memory and I will also introduce a method to maximize your performance by taking advantage of the different kinds of memory.

I will assume that the reader already knows how to setup a project in Microsoft Visual Studio that takes advantage of the CUDA programming API. If you don’t know how to setup a project in Visual Studio that uses CUDA, I recommend you follow my previous article titled [Introduction to CUDA]

Continue reading

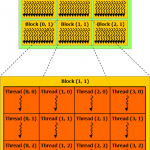

CUDA Thread Execution Model

Introduction to CUDA using Visual Studio 2008

In this article, I will give a brief introduction to using NVIDIA’s CUDA programming API to perform General Purpose Graphics Processing Unit Programming (or just GPGPU Programming). I will also show how to setup a project in Visual Studio that uses the CUDA runtime API to create a simple CUDA program.

Continue reading