Optimizing CUDA Applications

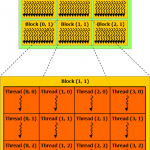

In this article I will discuss a few of the best practices described in the “CUDA C Best Practices Guide”. The guide lists about 40 best practices over more than 70 pages of documentation, which may be more than the casual programmer cares to absorb. Here I want to focus on the practices that I feel are the most important: those that translate directly into better performance for your CUDA application. If you are not yet familiar with CUDA, you may want to refer to my previous articles titled Introduction to CUDA, CUDA Thread Execution, and CUDA memory.