In this tutorial, you will be introduced to several classes that will help you to create a robust and flexible framework for building DirectX 12 applications. Some of the problems that are solved with the classes introduced in this lesson are managing CPU descriptors, copying CPU descriptors to GPU visible descriptor heaps, managing resource state across multiple threads, and uploading dynamic buffer data to the GPU. To automatically manage the state and descriptors for resources, a custom command list class is also provided.

Contents

- 1 Introduction

- 2 Upload Buffer

- 2.1 UploadBuffer Class

- 2.1.1 UploadBuffer Header

- 2.1.2 UploadBuffer Preamble

- 2.1.3 UploadBuffer::UploadBuffer

- 2.1.4 UploadBuffer::Allocate

- 2.1.5 UploadBuffer::RequestPage

- 2.1.6 UploadBuffer::Reset

- 2.1.7 UploadBuffer::Page::Page

- 2.1.8 UploadBuffer::Page::~Page

- 2.1.9 UploadBuffer::Page::HasSpace

- 2.1.10 UploadBuffer::Page::Allocate

- 2.1.11 UploadBuffer::Page::Reset

- 3 Descriptor Allocator

- 3.1 DescriptorAllocator Class

- 3.2 DescriptorAllocatorPage Class

- 3.2.1 DescriptorAllocatorPage Header

- 3.2.2 DescriptorAllocatorPage Preamble

- 3.2.3 DescriptorAllocatorPage::DescriptorAllocatorPage

- 3.2.4 DescriptorAllocatorPage::GetHeapType

- 3.2.5 DescriptorAllocatorPage::NumFreeHandles

- 3.2.6 DescriptorAllocatorPage::HasSpace

- 3.2.7 DescriptorAllocatorPage::AddNewBlock

- 3.2.8 DescriptorAllocatorPage::Allocate

- 3.2.9 DescriptorAllocatorPage::ComputeOffset

- 3.2.10 DescriptorAllocatorPage::Free

- 3.2.11 DescriptorAllocatorPage::FreeBlock

- 3.2.12 DescriptorAllocatorPage::ReleaseStaleDescriptors

- 3.3 DescriptorAllocation Class

- 3.3.1 DescriptorAllocation Header

- 3.3.2 DescriptorAllocation Preamble

- 3.3.3 DescriptorAllocation Default Constructor

- 3.3.4 DescriptorAllocation Parameterized Constructor

- 3.3.5 DescriptorAllocation Destructor

- 3.3.6 DescriptorAllocation Move Constructor

- 3.3.7 DescriptorAllocation Move Assignment

- 3.3.8 DescriptorAllocation::Free

- 3.3.9 DescriptorAllocation::IsNull

- 3.3.10 DescriptorAllocation::GetDescriptorHandle

- 3.3.11 DescriptorAllocation::GetNumHandles

- 3.3.12 DescriptorAllocation::GetDescriptorAllocatorPage

- 4 Dynamic Descriptor Heap

- 4.1 DynamicDescriptorHeap Class

- 4.1.1 DynamicDescriptorHeap Header

- 4.1.2 DynamicDescriptorHeap Preamble

- 4.1.3 DynamicDescriptorHeap::DynamicDescriptorHeap

- 4.1.4 DynamicDescriptorHeap::ParseRootSignature

- 4.1.5 DynamicDescriptorHeap::StageDescriptors

- 4.1.6 DynamicDescriptorHeap::ComputeStaleDescriptorCount

- 4.1.7 DynamicDescriptorHeap::RequestDescriptorHeap

- 4.1.8 DynamicDescriptorHeap::CreateDescriptorHeap

- 4.1.9 DynamicDescriptorHeap::CommitStagedDescriptors

- 4.1.10 DynamicDescriptorHeap::CommitStagedDescriptorsForDraw

- 4.1.11 DynamicDescriptorHeap::CommitStagedDescriptorsForDispatch

- 4.1.12 DynamicDescriptorHeap::CopyDescriptor

- 4.1.13 DynamicDescriptorHeap::Reset

- 5 Resource State Tracking

- 5.1 ResourceStateTracker Class

- 5.1.1 ResourceStateTracker Header

- 5.1.2 ResourceStateTracker Preamble

- 5.1.3 ResourceStateTracker::ResourceBarrier

- 5.1.4 ResourceStateTracker::TransitionResource

- 5.1.5 ResourceStateTracker::UAVBarrier

- 5.1.6 ResourceStateTracker::AliasBarrier

- 5.1.7 ResourceStateTracker::FlushResourceBarriers

- 5.1.8 ResourceStateTracker::FlushPendingResourceBarriers

- 5.1.9 ResourceStateTracker::CommitFinalResourceStates

- 5.1.10 ResourceStateTracker::Reset

- 5.1.11 ResourceStateTracker::Lock

- 5.1.12 ResourceStateTracker::Unlock

- 5.1.13 ResourceStateTracker::AddGlobalResourceState

- 5.1.14 ResourceStateTracker::RemoveGlobalResourceState

- 6 Custom Command List

- 7 Conclusion

- 8 Download the Source

- 9 References

The design of these classes prioritizes convenience for the graphics programmer when creating demos (for research purposes) but may not reflect the most optimized implementations that would be used in production game engines. Feel free to share your thoughts in the comments below about how to improve the design of the classes shown here.

Introduction

As you have learned in the previous lessons, compared to DirectX 11 or OpenGL, DirectX 12 introduces a few architectural changes that create some challenges for the graphics programmer. These architectural changes provide a lower-level rendering API but also require a lot of additional code to be written just to get anything to appear on screen. When I first started working with DirectX 12, I really struggled with issues such as memory management, descriptors, and resource state management. What’s the best memory management scheme to use to store resources? How do I make sure I have enough descriptors to render a frame?

In this lesson, I will introduce several classes that will greatly simplify the development of DirectX 12 applications. The first of these classes is the UploadBuffer. The UploadBuffer is a linear allocator that creates resources in an Upload Heap. The purpose of this class is to provide the ability to upload dynamic constant, vertex, and index buffer data (or any buffer data for that matter) to the GPU. The most common use-case for the UploadBuffer class is to upload uniform data to a ConstantBuffer used in a shader. Another typical use-case for the UploadBuffer is for particle effects. If the particles are simulated on the CPU, the computed particle attributes need to be uploaded to the GPU every frame. Instead of creating a new upload buffer every frame, the UploadBuffer is used to upload the particle data to the GPU. Another use-case for the UploadBuffer is rendering a User Interface (UI). If the UI is dynamic (for example if you want to show run-time performance profiling) then the UI needs to be generated every frame with the new output. For each of these use cases, it is ideal to create a large resource in an upload heap, map a CPU pointer to the underlying resource, and copy the required data (using a memcpy for example).

The next class that I will discuss is the DescriptorAllocator class, which is used to allocate a number of CPU visible descriptors. CPU visible descriptors are used for Render Target Views (RTV) and Depth-Stencil Views (DSV). CPU visible descriptors are also used to create Constant Buffer Views (CBV), Shader Resource Views (SRV), Unordered Access Views (UAV), and Samplers, but CBVs, SRVs, UAVs, and Samplers require a corresponding GPU visible descriptor before they can be used in a shader.

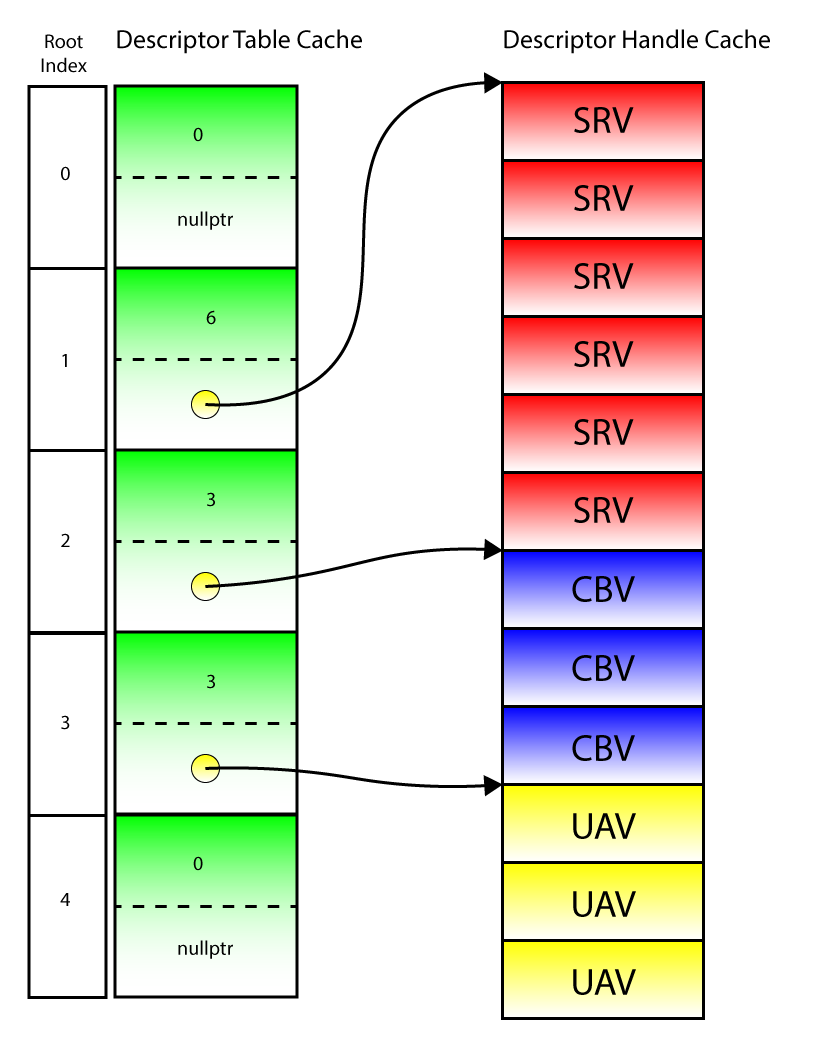

Whenever a Draw or Dispatch command is executed on a command list, any resource that is read from or written to in the shader needs to be bound to the graphics or compute pipeline using a GPU visible descriptor. Although buffer resources can be bound to the GPU using inline descriptors (see Lesson 2), texture resources cannot be bound using inline descriptors and must be bound to the GPU using a descriptor table. If the shader uses a lot of textures (this is the case if you are doing Physically Based Rendering for example), then all of the textures needed during the draw or dispatch call must be bound to the graphics or compute pipeline at the same time. Usually all of the SRVs for the textures are bound in a contiguous block of GPU visible descriptors in a single descriptor table range. But if textures are loaded in random order, or the same texture is being used for multiple draw calls, then how can one ensure that all of the textures are bound in a contiguous block of GPU visible descriptors? Another issue is that only a single descriptor heap of the same type (CBV_SRV_UAV or SAMPLER) can be bound on the command list at any moment. So all GPU visible descriptors must come from a single descriptor heap (the descriptor heaps can only be changed between Draw or Dispatch calls)! Yet another issue arises since descriptors cannot be reused until the command list that is using them has completed executing on the GPU. So how do you know how many GPU visible descriptors need to be allocated up-front? In all but the simplest cases, it is impossible to know how many GPU visible descriptors will ever be needed for an entire frame (or 3 frames in the case of triple-buffering). The DynamicDescriptorHeap class described in this lesson solves the problem of ensuring that all of the GPU visible descriptors are copied to a single GPU visible descriptor heap before a Draw or Dispatch command is executed on the GPU.

Another tricky problem to solve in a DirectX 12 renderer is ensuring that resources are always in the correct state when they need to be. In order to perform a resource transition, both the before and after states of the resource need to be specified in the transition barrier. But if a resource is being used in different states in multiple command lists, then the graphics programmer needs to know exactly what state the resource was left in by the previous command list that was executed. A naïve approach would be to create a class that stores both the resource and the current state of that resource. Anytime a transition barrier is performed on the resource, the current resource state is checked and used as the before state. This approach would work in a single-threaded renderer but wouldn’t work if the command lists are being built on different threads! In this case, there is no way to guarantee the state of the resource across multiple threads. The graphics programmer should only be concerned with implementing the graphics application and not with synchronizing the state of a resource across multiple command lists, multiple command queues, and multiple threads! The ResourceStateTracker class introduced in this lesson strives to solve the problem of tracking the resource state in a multi-threaded renderer.

In order to bring everything together and make the life of a graphics programmer as easy as possible, a custom CommandList class is introduced which uses the aforementioned classes to simplify loading of texture and buffer resources, tracking resource state and minimizing transition barriers, and ensuring that all of the resources used in a shader are correctly bound to GPU visible descriptors. The goal of the custom CommandList class described in this lesson is to abstract all of the complications of using DirectX 12 away and reduce the game specific code from thousands of lines of (user) code to just a few hundred.

Upload Buffer

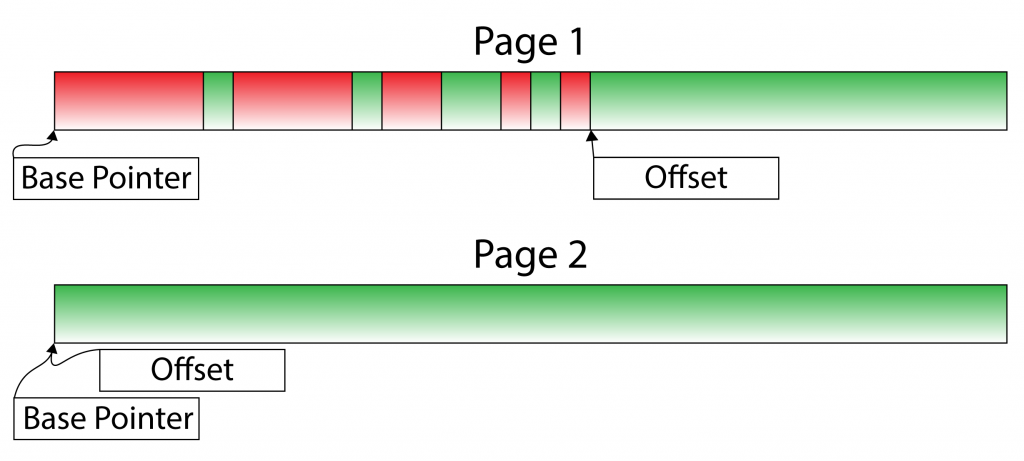

The UploadBuffer class provides a simple wrapper around a resource that is created in an upload heap. The UploadBuffer is implemented as a linear allocator that allocates chunks or blocks of memory from memory pages. If a memory page cannot satisfy an allocation request, a new page is created and added to a list of available pages. A linear allocator can’t grow indefinitely so when a page of memory is no longer in use (for example, the command list that uses an allocation from that page is finished executing on the GPU) then the page can be returned to the list of available pages in the heap. The image below shows an example of a linear allocator.

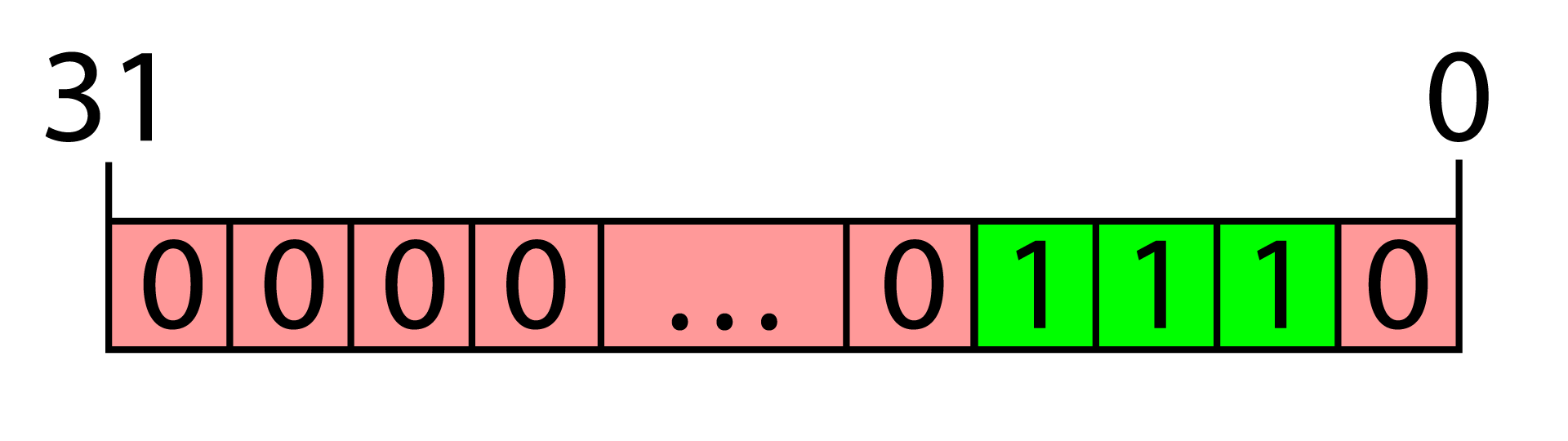

The image shows two pages from a linear allocator. The green blocks are free memory while the red blocks are allocated. The green blocks in the first page before the offset pointer represent fragmentation created by aligned allocations.

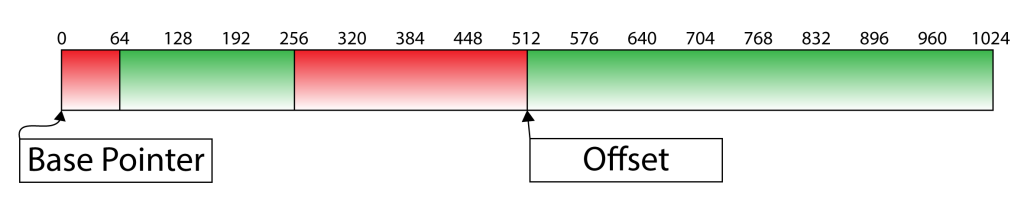

A linear allocator is probably the simplest allocator to implement since it only needs to store two pointers per memory page (the base pointer, and the current offset in the page). The above image shows an example of a linear allocator after several allocations have been made. The red blocks represent allocated blocks while the green blocks represent free blocks within the page. Allocated blocks are not freed or returned back to the memory page, but once all of the allocations are no longer being used, the entire page of memory can be returned to the available pages for the allocator and the offset pointer within the page is reset to the base pointer. The green chunks of free memory between the allocated blocks are a result of external fragmentation created by the alignment of allocated blocks. For example, if the first allocation is a block of 64 bytes and the next allocation needs to be aligned to 256 bytes (constant buffers are required to be aligned to 256 bytes) then there are 192 bytes of unused space in the memory page between the first and second allocations.

The example shows a memory page with two allocations. The first allocation is 64 bytes and the second allocation is 256-byte aligned. This results in 192 bytes of external fragmentation between the two allocations.
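The 192 bytes in this example follow from rounding the current offset up to the next multiple of the alignment. As a worked equation (the symbols offset, alignment, and padding are used here only for illustration):

\[
\text{alignedOffset} = \left\lceil \frac{\text{offset}}{\text{alignment}} \right\rceil \cdot \text{alignment} = \left\lceil \frac{64}{256} \right\rceil \cdot 256 = 256,
\qquad
\text{padding} = \text{alignedOffset} - \text{offset} = 256 - 64 = 192
\]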

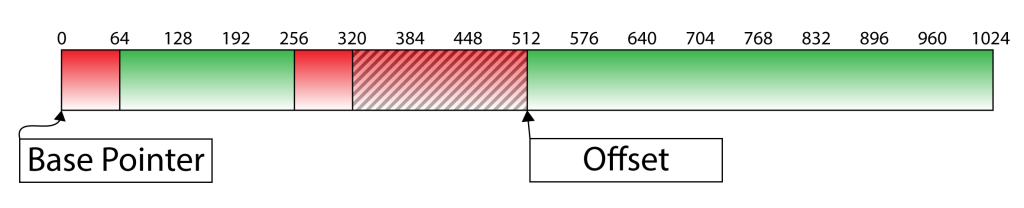

The linear allocator also suffers from internal fragmentation when a block of memory is requested but the size of the allocation is smaller than the requested alignment. For example, a block of 64 bytes of memory is 256-byte aligned (this is typical of a constant buffer that contains only a single 4×4 matrix). The allocation returns 256 bytes even if only 64 bytes will ever be used.

The following image shows the result of internal fragmentation caused by allocations that are smaller than their alignment. The shaded area in the second allocation is wasted since the allocation only required 64 bytes but 256 bytes are allocated because of the alignment requirements resulting in 192 bytes of internal fragmentation.

The shaded area in the second allocation shown in the image above is unused memory resulting from internal fragmentation: only 64 bytes were requested, but the 256-byte alignment means that 192 bytes remain unused.

Regardless of the internal and external fragmentation issues, the linear allocator is ideal due to its simplicity and speed. Allocating from the linear allocator only requires the offset pointer to be updated which can be performed in constant time (\(\mathcal{O}(1)\)).
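To make the constant-time claim concrete, here is a minimal CPU-only sketch of a single page of a linear allocator. The LinearPage class is hypothetical and is not the UploadBuffer implementation from this lesson: it uses a std::vector as backing storage instead of a GPU resource, and alignment is measured relative to the start of the page rather than to an absolute address.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical CPU-only stand-in for one page of a linear allocator.
// It stores only the backing memory and the current offset.
class LinearPage
{
public:
    explicit LinearPage(std::size_t sizeInBytes)
        : m_Storage(sizeInBytes)
        , m_Offset(0)
    {}

    // Returns nullptr if the request does not fit in the remaining space.
    // The alignment must be a non-zero power of two and is applied to the
    // offset within the page, not to the absolute address.
    void* Allocate(std::size_t sizeInBytes, std::size_t alignment)
    {
        assert(alignment != 0 && (alignment & (alignment - 1)) == 0);

        // Round the current offset up to the next multiple of the alignment.
        const std::size_t start = (m_Offset + alignment - 1) & ~(alignment - 1);
        if (start > m_Storage.size() || sizeInBytes > m_Storage.size() - start)
        {
            return nullptr;
        }

        // Bumping the offset is the only bookkeeping required.
        m_Offset = start + sizeInBytes;
        return m_Storage.data() + start;
    }

    // Individual blocks are never freed; the whole page is recycled at once.
    void Reset() { m_Offset = 0; }

    std::size_t Offset() const { return m_Offset; }

private:
    std::vector<std::uint8_t> m_Storage;
    std::size_t m_Offset;
};
```

Allocating 64 bytes and then requesting a 256-byte aligned block from a fresh LinearPage places the second block at offset 256, which matches the fragmentation example described earlier.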

UploadBuffer Class

As mentioned in the introduction, the UploadBuffer class is used to satisfy requests for memory that must be uploaded to the GPU. When the data in the upload buffer is no longer required, the memory pages can be reused. A page only becomes available again when the command list that is using memory from that page is finished executing on the GPU. In order to simplify the implementation of the UploadBuffer class, it is assumed that each UploadBuffer instance is associated with a single command list/allocator. In the first tutorial, you learned that a command allocator can’t be reset unless it is no longer “in-flight” on the command queue. Similar to the command allocator, the UploadBuffer is only reset when any memory allocations from the UploadBuffer are no longer “in-flight” on the command queue. This is shown later in this lesson when describing the custom CommandList class.

The implementation of this UploadBuffer class is inspired by the implementation of the LinearAllocator class in the MiniEngine provided with the DirectX-Graphics-Samples repository available on GitHub [1].

The UploadBuffer class provides the following functionality:

- Allocate: Allocates a chunk of memory that can be used to upload data to the GPU.

- Reset: Release all allocations for reuse.

This provides a very simple interface definition for the UploadBuffer class.

The header file for the UploadBuffer class is shown next.

UploadBuffer Header

The UploadBuffer header file defines the public and private members of the class. The preamble is shown first which defines the header file dependencies for the class.

```cpp
/**
 * An UploadBuffer provides a convenient method to upload resources to the GPU.
 */
#pragma once

#include <Defines.h>

#include <wrl.h>
#include <d3d12.h>

#include <memory>
#include <deque>
```

The Defines.h header file contains a few useful macro definitions. This file is local to the project but the contents are not shown here for brevity. The source code for this file is available on GitHub here: Defines.h

The wrl.h header file provides access to the ComPtr template class.

The d3d12.h header file contains the interfaces for the DirectX 12 API.

The memory header contains the std::shared_ptr which is used to track the lifetime of memory pages in the allocator. The deque header contains the std::deque container class which is used to store a pool of memory pages.

```cpp
class UploadBuffer
{
public:
    // Use to upload data to the GPU
    struct Allocation
    {
        void* CPU;
        D3D12_GPU_VIRTUAL_ADDRESS GPU;
    };
```

The Allocation structure is used to return an allocation from the UploadBuffer::Allocate method which is shown later.

```cpp
    /**
     * @param pageSize The size to use to allocate new pages in GPU memory.
     */
    explicit UploadBuffer(size_t pageSize = _2MB);
```

The UploadBuffer class declares only a single constructor which takes the size of a memory page as its only argument. The default size of a page of memory is 2MB. 2MB should be sufficient for most cases, depending on usage. The size of a memory page should be approximately large enough to contain all of the allocations for a single command list. If a lot of dynamic memory allocations are made in the command list, then it may be worthwhile to make larger pages. It is important to understand that the memory pages are never returned to the system. Once a page is allocated, it is never deallocated unless the UploadBuffer instance is destructed. The intention of the UploadBuffer is that it is reused each frame so the same allocations will likely be made the next frame, but the data will be different. If the pages are never freed, then the cost of creating the pages each frame can be avoided.

```cpp
    /**
     * The maximum size of an allocation is the size of a single page.
     */
    size_t GetPageSize() const { return m_PageSize; }
```

The GetPageSize method simply returns the size of a single page of the allocator. This can be used to check if an allocation can be satisfied by the UploadBuffer. If an allocation can’t be satisfied (if the page size is too small for example) then this might be an indication that the page size needs to be larger.

```cpp
    /**
     * Allocate memory in an Upload heap.
     * An allocation must not exceed the size of a page.
     * Use a memcpy or similar method to copy the
     * buffer data to CPU pointer in the Allocation structure returned from
     * this function.
     */
    Allocation Allocate(size_t sizeInBytes, size_t alignment);
```

The Allocate method allocates a chunk of memory with the specified size and alignment. The Allocation structure returned from this method contains a CPU pointer that the data is copied to, and the GPU virtual address at which that data can be bound to the pipeline.
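As a rough illustration of how the returned structure is meant to be used, the following sketch copies a 4×4 matrix into an allocation with memcpy. Everything here is a stand-in so that the example compiles without the DirectX headers: the Allocation struct mirrors UploadBuffer::Allocation but uses a plain 64-bit integer for the GPU address, and CopyToAllocation and Matrix4x4 are hypothetical names that are not part of the library.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <type_traits>

// Stand-in for UploadBuffer::Allocation. In the real class the GPU member
// is a D3D12_GPU_VIRTUAL_ADDRESS, which is a 64-bit integer.
struct Allocation
{
    void*         CPU;
    std::uint64_t GPU;
};

// Hypothetical helper: copy a trivially copyable value to the CPU pointer
// of an allocation. The allocation must be at least sizeof(T) bytes.
template <typename T>
void CopyToAllocation(const Allocation& allocation, const T& data)
{
    static_assert(std::is_trivially_copyable<T>::value,
                  "memcpy requires a trivially copyable type");
    assert(allocation.CPU != nullptr);
    std::memcpy(allocation.CPU, &data, sizeof(T));
}

// Example payload: a 4x4 float matrix occupies 64 bytes.
struct Matrix4x4
{
    float m[16];
};
```

With the real class, the allocation would come from a call such as uploadBuffer.Allocate(sizeof(Matrix4x4), 256), and the GPU member could then be passed to a method such as ID3D12GraphicsCommandList::SetGraphicsRootConstantBufferView to bind the data as a constant buffer.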

```cpp
    /**
     * Release all allocated pages. This should only be done when the command list
     * is finished executing on the CommandQueue.
     */
    void Reset();
```

The Reset method is used to reset any allocations so that the memory can be reused for the next frame.

To keep track of the memory pages, an internal Page struct is defined. The Page struct stores a base CPU pointer, the offset within the page, and the ID3D12Resource that holds the GPU memory.

```cpp
private:
    // A single page for the allocator.
    struct Page
    {
        Page(size_t sizeInBytes);
```

The Page structure has only a single constructor which takes the size of the page as its only argument. This is the same as the pageSize argument that is passed to the constructor of the UploadBuffer class.

```cpp
        // Check to see if the page has room to satisfy the requested
        // allocation.
        bool HasSpace(size_t sizeInBytes, size_t alignment) const;
```

The Page::HasSpace method is used to check if the page can satisfy the requested allocation. If the allocation cannot be satisfied by the current page, the current page is retired and a new page is created.

```cpp
        // Allocate memory from the page.
        // Throws std::bad_alloc if the allocation size is larger
        // than the page size or the size of the allocation exceeds the
        // remaining space in the page.
        Allocation Allocate(size_t sizeInBytes, size_t alignment);
```

The Page::Allocate method is used to perform the actual allocation within the memory page.

```cpp
        // Reset the page for reuse.
        void Reset();
```

The Page::Reset method is used to reset the page for reuse. This resets the offset within the page to 0.

```cpp
    private:

        Microsoft::WRL::ComPtr<ID3D12Resource> m_d3d12Resource;

        // Base pointer.
        void* m_CPUPtr;
        D3D12_GPU_VIRTUAL_ADDRESS m_GPUPtr;

        // Allocated page size.
        size_t m_PageSize;
        // Current allocation offset in bytes.
        size_t m_Offset;
    };
```

The data that is private to the Page structure is the ID3D12Resource that contains the GPU memory for the page, the CPU and GPU base pointers, and the current offset within the page. The m_PageSize variable is also stored to make sure the requested allocation can be satisfied.

The UploadBuffer class needs to keep track of a pool of pages and provide a method to create new pages as required.

```cpp
    // A pool of memory pages.
    using PagePool = std::deque< std::shared_ptr<Page> >;
```

The PagePool type alias defines a std::deque container that stores pointers to the memory pages.

```cpp
    // Request a page from the pool of available pages
    // or create a new page if there are no available pages.
    std::shared_ptr<Page> RequestPage();
```

The RequestPage private method is used to provide an available memory page if one is available. If there are no more available pages, a new one is created and added to the page pool.

```cpp
    PagePool m_PagePool;
    PagePool m_AvailablePages;
```

The m_PagePool member variable is a PagePool used to hold all of the pages that have ever been created by the UploadBuffer class. The m_AvailablePages member variable on the other hand, is a pool of pages that are available for allocation.

```cpp
    std::shared_ptr<Page> m_CurrentPage;

    // The size of each page of memory.
    size_t m_PageSize;
};
```

The m_CurrentPage member variable is used to store a pointer to the current memory page. The m_PageSize variable stores the size of a memory page. This is set to the pageSize constructor argument and is used for allocating new pages.

View the full source code for UploadBuffer.h


UploadBuffer Preamble

The preamble for the source file of the UploadBuffer class contains the header file dependencies that are specific to the implementation of the class.

```cpp
#include <DX12LibPCH.h>

#include <UploadBuffer.h>

#include <Application.h>
#include <Helpers.h>

#include <d3dx12.h>

#include <new> // for std::bad_alloc
```

The DX12LibPCH.h header file is the precompiled header file for the DX12Lib project. All of the classes described in this article are part of the DX12Lib project.

The UploadBuffer.h is the header file that was just described in the previous section.

The Helpers.h header file contains some helper functions that are used by the UploadBuffer class. The source code for this file can be retrieved here: Helpers.h.

The d3dx12.h provides some helper functions and structs specific for DirectX 12. This file is hosted on GitHub and not distributed with the Windows 10 SDK. It is good practice to check GitHub if there is a new version of this file and always use the latest version in your own projects.

The new header contains the std::bad_alloc exception class which is thrown if an allocation larger than the size of a page is requested.

UploadBuffer::UploadBuffer

The UploadBuffer class provides a single parameterized constructor. The constructor takes the size of a memory page as its only argument.

```cpp
UploadBuffer::UploadBuffer(size_t pageSize)
    : m_PageSize(pageSize)
{}
```

Besides setting the m_PageSize member variable, the constructor does nothing. Memory pages will only be allocated if an allocation is requested. The UploadBuffer class is intended to be used as an internal class for the custom CommandList class (that is shown later in the lesson). If dynamic allocations are not required by the command list, then no pages will be allocated. This is a typical example of Lazy Initialization.

UploadBuffer::Allocate

The Allocate method is used to allocate a chunk (or block) of memory from a memory page. This method returns an UploadBuffer::Allocation struct that was defined in the header file.

```cpp
UploadBuffer::Allocation UploadBuffer::Allocate(size_t sizeInBytes, size_t alignment)
{
    if (sizeInBytes > m_PageSize)
    {
        throw std::bad_alloc();
    }

    // If there is no current page, or the requested allocation exceeds the
    // remaining space in the current page, request a new page.
    if (!m_CurrentPage || !m_CurrentPage->HasSpace(sizeInBytes, alignment))
    {
        m_CurrentPage = RequestPage();
    }

    return m_CurrentPage->Allocate(sizeInBytes, alignment);
}
```

The Allocate method takes two arguments:

- size_t sizeInBytes: The size of the allocation in bytes.

- size_t alignment: The memory alignment of the allocation in bytes. For example, allocations for constant buffers must be aligned to 256 bytes.

If the size of the allocation exceeds the size of a memory page, the method throws a std::bad_alloc exception.

If there is either no memory page (this is the case when the UploadBuffer is first created) or the current page cannot satisfy the request, a new page is requested.

Finally, the actual allocation is made from the current memory page and the resulting allocation is returned to the caller.

UploadBuffer::RequestPage

If either the allocator does not have a page to make an allocation from, or the current page does not have the available space to satisfy the allocation request, a new page must be retrieved from the list of available pages or a new page must be created. The RequestPage method will return a memory page that can be used to satisfy allocation requests.

```cpp
std::shared_ptr<UploadBuffer::Page> UploadBuffer::RequestPage()
{
    std::shared_ptr<Page> page;

    if (!m_AvailablePages.empty())
    {
        page = m_AvailablePages.front();
        m_AvailablePages.pop_front();
    }
    else
    {
        page = std::make_shared<Page>(m_PageSize);
        m_PagePool.push_back(page);
    }

    return page;
}
```

If there are pages available in the m_AvailablePages queue, the Page at the front of the queue is retrieved and popped off the queue.

If there are no available pages, then a new page is created and pushed to the back of the m_PagePool queue. The m_PagePool queue stores all of the pages created by the allocator. In this case, the page is not added to the m_AvailablePages queue because it is going to be used to satisfy the allocation request. When the UploadBuffer is reset, the m_PagePool queue is used to reset the m_AvailablePages queue (which is shown later when the Reset function is described).
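To see how the two queues interact across frames, the following CPU-only sketch reproduces the recycling logic with a trivial page type. The names PageRecycler and SimulateTwoFrames are hypothetical, and the Page struct here tracks only an offset instead of owning a GPU resource.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <memory>

// CPU-only stand-in for UploadBuffer::Page; it tracks only an offset.
struct Page
{
    std::size_t offset = 0;
    void Reset() { offset = 0; }
};

// Hypothetical class that isolates the page pooling logic.
class PageRecycler
{
public:
    // Hand out a recycled page if one is available, otherwise create one.
    std::shared_ptr<Page> RequestPage()
    {
        std::shared_ptr<Page> page;
        if (!m_AvailablePages.empty())
        {
            page = m_AvailablePages.front();
            m_AvailablePages.pop_front();
        }
        else
        {
            page = std::make_shared<Page>();
            m_PagePool.push_back(page);
        }
        return page;
    }

    // Make every page that was ever created available again.
    void Reset()
    {
        m_AvailablePages = m_PagePool;
        for (const auto& page : m_AvailablePages)
        {
            page->Reset();
        }
    }

    std::size_t TotalPages() const { return m_PagePool.size(); }
    std::size_t AvailablePages() const { return m_AvailablePages.size(); }

private:
    std::deque<std::shared_ptr<Page>> m_PagePool;
    std::deque<std::shared_ptr<Page>> m_AvailablePages;
};

// Simulates two frames and returns the total number of pages created.
inline std::size_t SimulateTwoFrames()
{
    PageRecycler recycler;

    // Frame 1: two pages are needed, so two pages are created.
    auto first = recycler.RequestPage();
    recycler.RequestPage();
    recycler.Reset();

    // Frame 2: the first page is handed out again instead of a new one.
    auto reused = recycler.RequestPage();
    assert(reused == first);

    return recycler.TotalPages();
}
```

Under these assumptions, the second frame creates no new pages: the pool still holds the two pages created during the first frame.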

UploadBuffer::Reset

The Reset method is used to reset all of the memory allocations so that they can be reused for the next frame (or more specifically, the next command list recording).

```cpp
void UploadBuffer::Reset()
{
    m_CurrentPage = nullptr;
    // Reset all available pages.
    m_AvailablePages = m_PagePool;

    for (const auto& page : m_AvailablePages)
    {
        // Reset the page for new allocations.
        page->Reset();
    }
}
```

The Reset method makes all of the pages available again by copying the m_PagePool to the m_AvailablePages queue.

Each of the available pages is then reset to prepare it for new allocations.

UploadBuffer::Page::Page

The constructor for a Page takes the size of the page as its only argument.

```cpp
UploadBuffer::Page::Page(size_t sizeInBytes)
    : m_CPUPtr(nullptr)
    , m_GPUPtr(D3D12_GPU_VIRTUAL_ADDRESS(0))
    , m_PageSize(sizeInBytes)
    , m_Offset(0)
{
    auto device = Application::Get().GetDevice();

    // Named locals are used because taking the address of a temporary
    // is a non-standard compiler extension.
    CD3DX12_HEAP_PROPERTIES heapProperties(D3D12_HEAP_TYPE_UPLOAD);
    CD3DX12_RESOURCE_DESC bufferDesc = CD3DX12_RESOURCE_DESC::Buffer(m_PageSize);

    ThrowIfFailed(device->CreateCommittedResource(
        &heapProperties,
        D3D12_HEAP_FLAG_NONE,
        &bufferDesc,
        D3D12_RESOURCE_STATE_GENERIC_READ,
        nullptr,
        IID_PPV_ARGS(&m_d3d12Resource)
    ));

    m_GPUPtr = m_d3d12Resource->GetGPUVirtualAddress();
    // Map can fail, so its result is checked as well.
    ThrowIfFailed(m_d3d12Resource->Map(0, nullptr, &m_CPUPtr));
}
```

The Page constructor also creates the ID3D12Resource as a committed resource in an upload heap. The creation of committed resources is described in Lesson 2 and for brevity is not described again here.

After the resource is created, the GPU and CPU addresses are retrieved using the ID3D12Resource::GetGPUVirtualAddress and ID3D12Resource::Map methods respectively. As long as the resource is created in an upload heap, it is safe to leave the resource mapped until the resource is no longer needed.

UploadBuffer::Page::~Page

The destructor for the Page struct unmaps the resource memory using the ID3D12Resource::Unmap method and resets the CPU and GPU pointers to 0. Since the m_d3d12Resource is stored using a ComPtr there is no need to explicitly release it since it will be automatically released after the Page is destructed.

```cpp
UploadBuffer::Page::~Page()
{
    m_d3d12Resource->Unmap(0, nullptr);
    m_CPUPtr = nullptr;
    m_GPUPtr = D3D12_GPU_VIRTUAL_ADDRESS(0);
}
```

Before allocating memory from a Page, the Page must have enough space to satisfy the allocation request. The Page::HasSpace method is used to check if the page can satisfy the requested allocation.

UploadBuffer::Page::HasSpace

The Page::HasSpace method checks to see if the page can satisfy the requested allocation. This method returns true if the allocation can be satisfied, or false if the allocation cannot be satisfied.

```cpp
bool UploadBuffer::Page::HasSpace(size_t sizeInBytes, size_t alignment) const
{
    size_t alignedSize = Math::AlignUp(sizeInBytes, alignment);
    size_t alignedOffset = Math::AlignUp(m_Offset, alignment);

    return alignedOffset + alignedSize <= m_PageSize;
}
```

The HasSpace method must take the alignment into consideration. If the requested aligned allocation can be satisfied, this method returns true.
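The effect of the alignment on this check can be seen with a small compile-time example. The free functions below are a sketch only: AlignUp is a stand-in for Math::AlignUp from Helpers.h (whose source is not shown in this lesson) and assumes a power-of-two alignment, and kPageSize is a hypothetical constant matching the 2MB default page size.

```cpp
#include <cstddef>

// Stand-in for Math::AlignUp. Assumes alignment is a non-zero power of two.
constexpr std::size_t AlignUp(std::size_t value, std::size_t alignment)
{
    return (value + alignment - 1) & ~(alignment - 1);
}

// The same test as Page::HasSpace, written as a free function so that it
// can be evaluated at compile time.
constexpr bool HasSpace(std::size_t pageSize, std::size_t offset,
                        std::size_t sizeInBytes, std::size_t alignment)
{
    return AlignUp(offset, alignment) + AlignUp(sizeInBytes, alignment) <= pageSize;
}

constexpr std::size_t kPageSize = 2 * 1024 * 1024; // 2 MB

// With 64 bytes left in the page, a 64-byte request with 16-byte alignment
// still fits exactly.
static_assert(HasSpace(kPageSize, kPageSize - 64, 64, 16),
              "the request fits in the last 64 bytes");

// The same request with 256-byte alignment does not fit: the offset is
// rounded up to the end of the page and the size is rounded up to 256.
static_assert(!HasSpace(kPageSize, kPageSize - 64, 64, 256),
              "alignment pushes the request past the end of the page");
```

In the second case the UploadBuffer would request a new page, even though the remaining 64 bytes appear to be large enough for the data itself.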

UploadBuffer::Page::Allocate

The Page::Allocate method is where the actual allocation occurs. This method returns an Allocation structure that can be used to directly copy (using memcpy for example) CPU data to the GPU and bind that GPU address to the pipeline.

```cpp
UploadBuffer::Allocation UploadBuffer::Page::Allocate(size_t sizeInBytes, size_t alignment)
{
    if (!HasSpace(sizeInBytes, alignment))
    {
        // Can't allocate space from page.
        throw std::bad_alloc();
    }

    size_t alignedSize = Math::AlignUp(sizeInBytes, alignment);
    m_Offset = Math::AlignUp(m_Offset, alignment);

    Allocation allocation;
    allocation.CPU = static_cast<uint8_t*>(m_CPUPtr) + m_Offset;
    allocation.GPU = m_GPUPtr + m_Offset;

    m_Offset += alignedSize;

    return allocation;
}
```

If the Page does not have enough space to satisfy the allocation request, this method will throw a std::bad_alloc exception.

The space check at the top of the Page::Allocate method largely repeats the check that UploadBuffer::Allocate already performs before calling it. Feel free to remove this check in your own implementation.

Both the size and the starting address of an allocation should be aligned to the requested alignment. In most cases the size of the allocation will already be aligned to the requested alignment (for example, when allocating memory for a vertex or index buffer) but to ensure correctness, the requested allocation size is explicitly aligned up to the requested alignment.

The current offset within the page must also be aligned to the requested alignment.

The aligned CPU and GPU addresses are then written to the Allocation structure that is returned by this method.

Next, the page’s pointer offset is incremented by the aligned size of the allocation.

Finally, the Allocation structure is returned to the caller.

The Page::Allocate method is not thread safe! If you require thread safety for this method then you may want to insert a std::lock_guard at the top of this method. Since I do not use the same instance of an UploadBuffer class across multiple threads, I consider this to be unnecessary overhead (there is some cost associated with locking and unlocking mutexes that I do not want to pay for here).

UploadBuffer::Page::Reset

The Page::Reset method simply resets the page’s pointer offset to 0 so that it can be used to make new allocations.

```cpp
void UploadBuffer::Page::Reset()
{
    m_Offset = 0;
}
```

This concludes the implementation of the UploadBuffer class. In the next section, the DescriptorAllocator class is described. As the name implies, the DescriptorAllocator class is used to allocate (CPU visible) descriptors. CPU visible descriptors are used to create views for resources (for example Render Target Views (RTV), Depth-Stencil Views (DSV), Constant Buffer Views (CBV), Shader Resource Views (SRV), Unordered Access Views (UAV), and Samplers). Before a CBV, SRV, UAV, or Sampler can be used in a shader, it must be copied to a GPU visible descriptor. The DynamicDescriptorHeap class handles copying of CPU visible descriptors to GPU visible descriptor heaps. The DynamicDescriptorHeap class is the subject of the sections that follow the DescriptorAllocator.

View the full source code for UploadBuffer.cpp


Descriptor Allocator

The DescriptorAllocator class is used to allocate descriptors from a CPU visible descriptor heap. CPU visible descriptors are useful for “staging” resource descriptors in CPU memory. The staged descriptors are later copied to a GPU visible descriptor heap for use in a shader.

CPU visible descriptors are used for describing:

- Render Target Views (RTV)

- Depth-Stencil Views (DSV)

- Constant Buffer Views (CBV)

- Shader Resource Views (SRV)

- Unordered Access Views (UAV)

- Samplers

The DescriptorAllocator class is used to allocate descriptors to the application when loading new resources (like textures). In a typical game engine, resources may need to be loaded and unloaded from memory at sporadic moments while the player moves around the level. To support large dynamic worlds, it may be necessary to initially load some resources, unload them from memory, and reload different resources. The DescriptorAllocator manages all of the descriptors that are required to describe those resources. Descriptors that are no longer used (for example, when a resource is unloaded from memory) will be automatically returned back to the descriptor heap for reuse.

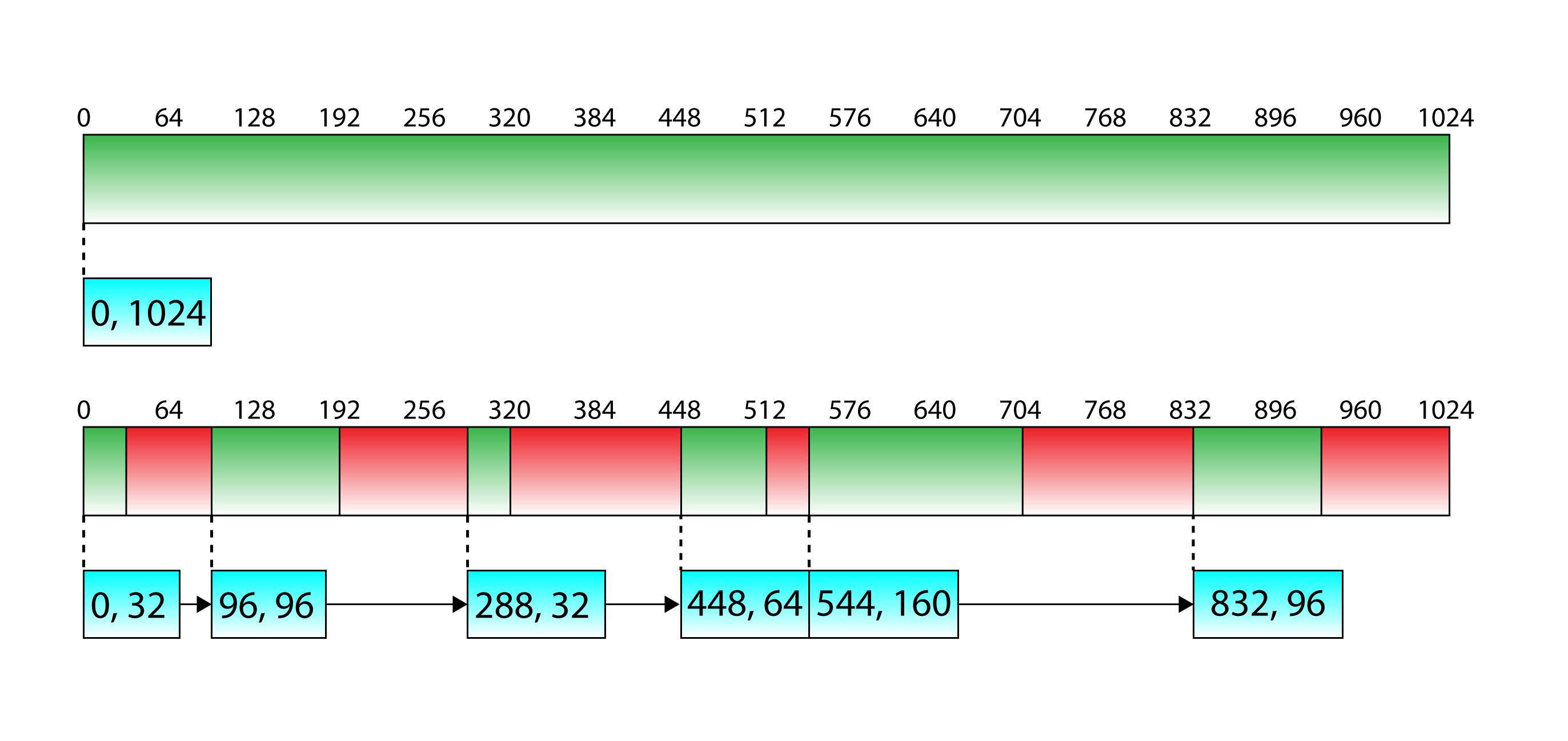

The DescriptorAllocator class uses a free list memory allocation scheme inspired by the Variable Sized Memory Allocations Manager by Diligent Graphics [2] to manage the descriptors. A free list keeps track of the available allocations in a page of memory. Each entry of the free list stores the offset from the beginning of the memory page and the size of the available allocation. To satisfy an allocation request, the free list is searched for an entry that is large enough. If the allocation cannot be satisfied by the current page, a new page is created in memory.

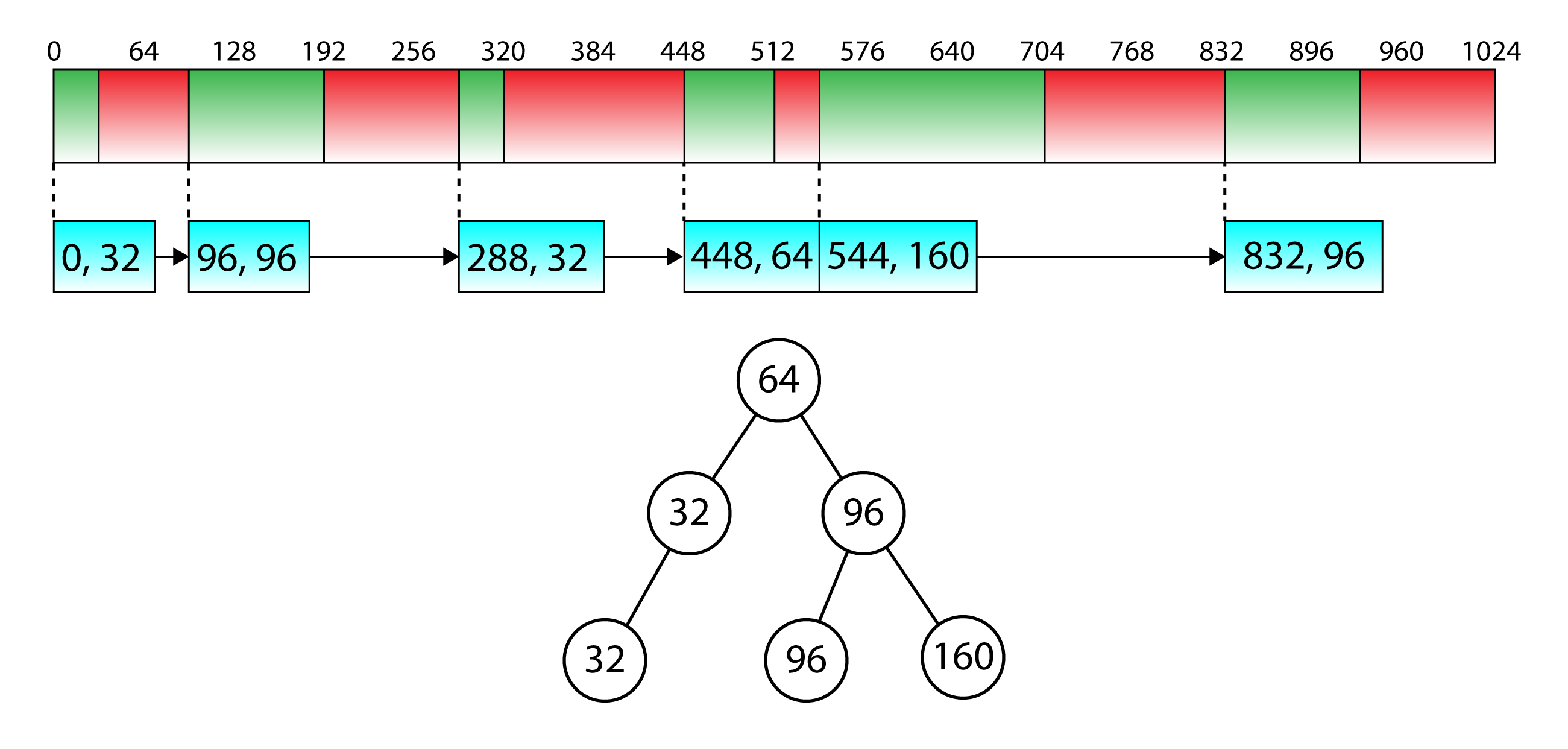

The image shows two pages of a free list allocator. The top image shows a memory page with no allocations. In this case, there is only a single entry in the free list which contains the entire page of memory. The bottom image shows the memory page after several allocations have been made.

The above image shows an example of pages of memory that are allocated using a free list allocation strategy. The top image shows the initial state of the page before any allocations are made. In this case, the free list contains only a single entry which refers to the entire memory page. The bottom image shows an example of a memory page after several allocations have been made. In this case, the free list contains several entries which represent the available blocks of memory in the page.

To make a new allocation from the page, all of the entries in the free list are searched and the first block that is large enough to satisfy the request is used. If there are no free blocks that can satisfy the request, then a new page is allocated.

This strategy for allocating memory is called first-fit (find the first free block that fits) and is the easiest strategy to implement since it only consists of a linear search through the free list but it is not the most efficient method to use for allocation. A linear search has \(\mathcal{O}(n)\) (worst case) complexity (where \(n\) is the number of entries in the free list).
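As a minimal sketch of the first-fit strategy (not the code used by the DescriptorAllocatorPage class), the linear search could look like the following. The FreeBlockSketch struct and the FindFirstFit function are hypothetical names introduced only for this illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical free list entry: offset from the start of the page and block size.
struct FreeBlockSketch
{
    std::uint32_t offset;
    std::uint32_t size;
};

// Returns the index of the first free block that is large enough to satisfy
// the request, or -1 if no block fits. Worst case, every entry is visited.
inline int FindFirstFit(const std::vector<FreeBlockSketch>& freeList, std::uint32_t size)
{
    for (std::size_t i = 0; i < freeList.size(); ++i)
    {
        if (freeList[i].size >= size)
        {
            return static_cast<int>(i);
        }
    }
    return -1;
}
```

A return value of -1 corresponds to the case where a new page has to be allocated.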

A better technique would be to sort the free blocks by their size and perform a binary search through the sizes to find a block that is large enough to satisfy the request. If you remember from your algorithm analysis class, a binary search has \(\mathcal{O}(\log_2 n)\) complexity (where \(n\) is the number of values to search) which is better than \(\mathcal{O}(n)\).

The image shows an example of a memory page after several allocations using a free list allocation strategy. The binary tree represents the entries of the free list sorted by size.

The above image shows a memory page after several allocations have been made. The binary tree in the bottom of the image represents the entries of the free list sorted by size. Using the binary tree, an allocation of 160 bytes can be satisfied by searching just three nodes. Using the linear list would require five entries to be searched before the allocation could be satisfied. With only six entries in the free list, this may not seem like a significant performance improvement, but with thousands (or millions) of entries, the performance improvement is significant.
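One way to get a sorted-by-size search in C++ is an ordered associative container. The sketch below assumes a std::multimap keyed on block size whose value is the block offset. The SizeIndexSketch alias and the FindBySize function are hypothetical names, and the block values in any example are made up rather than taken from the image.

```cpp
#include <cassert>
#include <cstdint>
#include <map>

// Hypothetical index of free blocks: block size -> block offset.
// A multimap is used because several blocks may share the same size.
using SizeIndexSketch = std::multimap<std::uint32_t, std::uint32_t>;

// Finds the smallest free block whose size is >= request.
// lower_bound has logarithmic complexity in the number of entries.
inline bool FindBySize(const SizeIndexSketch& bySize, std::uint32_t request, std::uint32_t& offsetOut)
{
    auto it = bySize.lower_bound(request);
    if (it == bySize.end())
    {
        return false; // No block is large enough.
    }

    offsetOut = it->second;
    return true;
}
```

Note that this search returns the smallest block that fits (a best-fit choice) rather than the first block by offset.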

Three different classes are used to implement this strategy:

- DescriptorAllocator: This is the main interface to the application for requesting descriptors. The DescriptorAllocator class manages the descriptor pages.

- DescriptorAllocatorPage: This class is a wrapper for an ID3D12DescriptorHeap. The DescriptorAllocatorPage also keeps track of the free list for the page.

- DescriptorAllocation: This class wraps an allocation that is returned from the DescriptorAllocator::Allocate method. The DescriptorAllocation class also stores a pointer back to the page it came from and will automatically free itself if the descriptor(s) are no longer required.

The DescriptorAllocator class is described first.

DescriptorAllocator Class

The implementation of the DescriptorAllocator class is very similar to the UploadBuffer class shown in the previous section. The DescriptorAllocator class stores a pool of DescriptorAllocatorPages. If there are no pages that can satisfy a request, a new page is created and added to the pool. Similar to the UploadBuffer class, the DescriptorAllocator class has a very simple public interface and only provides a method to allocate descriptors.

DescriptorAllocator Header

The header file for the DescriptorAllocator class declares the public and private members of the class. The preamble for the header file is shown first which includes the dependencies for the class.

```cpp
#include "DescriptorAllocation.h"

#include "d3dx12.h"

#include <cstdint>
#include <mutex>
#include <memory>
#include <set>
#include <vector>

class DescriptorAllocatorPage;
```

The DescriptorAllocator::Allocate method returns a DescriptorAllocation by value which requires the DescriptorAllocation.h header file to be included (on line 40) in this file.

The ubiquitous d3dx12.h header file included on line 42 is required for the DirectX 12 API and helper structures and functions.

The cstdint header file included on line 44 is required for the fixed-width integer types (uint32_t and uint64_t).

The mutex header file is included for the std::mutex synchronization primitive. The mutex is used in the Allocate method to allow allocations to be safely made across multiple threads.

The memory header file is required for the std::shared_ptr pointer class. Shared pointers are used to track the lifetime of the pages. Each allocation also stores a shared pointer back to the page it came from.

The set header file includes the std::set container class. A set is used to store an ordered list of indices to the available pages in the page pool.

The vector header file includes the std::vector container class.

The DescriptorAllocatorPage class is used by the DescriptorAllocator class but the header file does not need to be included since the DescriptorAllocatorPage class is only used as a pointer within the DescriptorAllocator class. In this case, it is sufficient to provide a forward-declaration of the class (on line 50) without the need to include the header file.

The DescriptorAllocator class defines two public member functions:

- DescriptorAllocator::Allocate: Allocates a number of contiguous descriptors from a CPU visible descriptor heap.

- DescriptorAllocator::ReleaseStaleDescriptors: Frees any stale descriptors that can be returned to the list of available descriptors for reuse. This method should only be called after any of the descriptors that were freed are no longer being referenced by the command queue.

The definition of these methods is shown later. The declaration of these methods is made in the header file for the DescriptorAllocator class.

```cpp
class DescriptorAllocator
{
public:
    DescriptorAllocator(D3D12_DESCRIPTOR_HEAP_TYPE type, uint32_t numDescriptorsPerHeap = 256);
    virtual ~DescriptorAllocator();

    /**
     * Allocate a number of contiguous descriptors from a CPU visible descriptor heap.
     *
     * @param numDescriptors The number of contiguous descriptors to allocate.
     * Cannot be more than the number of descriptors per descriptor heap.
     */
    DescriptorAllocation Allocate(uint32_t numDescriptors = 1);

    /**
     * When the frame has completed, the stale descriptors can be released.
     */
    void ReleaseStaleDescriptors( uint64_t frameNumber );
```

The DescriptorAllocator constructor declared on line 55 takes two parameters. The first is the type of descriptors that the DescriptorAllocator will allocate. This can be one of the CBV_SRV_UAV, SAMPLER, RTV, or DSV types.

The second parameter to the constructor is the number of descriptors per descriptor heap. By default, descriptor heaps will be created with 256 descriptors. This value is arbitrary and only needs to be as large as the maximum number of contiguous descriptors that will ever be needed. If all of the descriptors in a descriptor heap have been exhausted, a new heap will be created to satisfy the allocation request.

The DescriptorAllocator::Allocate method allocates a number of contiguous descriptors from a descriptor heap. By default, only a single descriptor is allocated. The numDescriptors argument can be specified if more than one descriptor is required. This method returns a DescriptorAllocation which is a wrapper for the allocated descriptor. The DescriptorAllocation class is described later.

```cpp
private:
    using DescriptorHeapPool = std::vector< std::shared_ptr<DescriptorAllocatorPage> >;

    // Create a new heap with a specific number of descriptors.
    std::shared_ptr<DescriptorAllocatorPage> CreateAllocatorPage();

    D3D12_DESCRIPTOR_HEAP_TYPE m_HeapType;
    uint32_t m_NumDescriptorsPerHeap;

    DescriptorHeapPool m_HeapPool;
    // Indices of available heaps in the heap pool.
    std::set<size_t> m_AvailableHeaps;

    std::mutex m_AllocationMutex;
};
```

The DescriptorHeapPool defined on line 72 is a type alias of a std::vector of DescriptorAllocatorPages.

The DescriptorAllocator::CreateAllocatorPage method declared on line 75 is an internal method that is used to create a new allocator page if there are no pages in the page pool that can satisfy the allocation request.

The m_HeapType variable stores the type of descriptors to allocate. This variable is also used to create new descriptor heaps.

The m_NumDescriptorsPerHeap variable stores the number of descriptors to create per descriptor heap.

The m_HeapPool is a std::vector of DescriptorAllocatorPages. This variable is used to keep track of all allocated pages.

The m_AvailableHeaps is a std::set of indices of available pages in the m_HeapPool vector. If all of the descriptors in a DescriptorAllocatorPage have been exhausted, then the index of that page in the m_HeapPool vector is removed from the m_AvailableHeaps set. This ensures that exhausted pages (pages with no free descriptors) are skipped when looking for a DescriptorAllocatorPage that can satisfy the allocation request.

Since the DescriptorAllocator class is intended to be thread safe, a std::mutex is used to guard against multiple threads allocating or deallocating from the DescriptorAllocator at the same time.

In the next sections, the implementation of the DescriptorAllocator is shown.

View the full source code for DescriptorAllocator.h


DescriptorAllocator Preamble

Before defining the methods of the DescriptorAllocator class, a few header files used by the class need to be included.

```cpp
#include <DX12LibPCH.h>

#include <DescriptorAllocator.h>
#include <DescriptorAllocatorPage.h>
```

The DX12LibPCH.h is the precompiled header file for the DX12Lib project.

The DescriptorAllocator.h header file included on line 3 was just described in the previous section and the DescriptorAllocatorPage.h header file contains the declaration of the DescriptorAllocatorPage class (which will be shown later).

DescriptorAllocator::DescriptorAllocator

Similar to the constructor for the UploadBuffer class shown previously, the constructor for the DescriptorAllocator class does very little except initializing the class’s member variables.

```cpp
DescriptorAllocator::DescriptorAllocator(D3D12_DESCRIPTOR_HEAP_TYPE type, uint32_t numDescriptorsPerHeap)
    : m_HeapType(type)
    , m_NumDescriptorsPerHeap(numDescriptorsPerHeap)
{
}
```

The m_HeapType and m_NumDescriptorsPerHeap member variables are initialized based on the arguments passed to the constructor.

DescriptorAllocator::CreateAllocatorPage

The CreateAllocatorPage method is used to create a new page of descriptors. The DescriptorAllocatorPage class (which will be shown later) is a wrapper for the ID3D12DescriptorHeap and manages the actual descriptors.

```cpp
std::shared_ptr<DescriptorAllocatorPage> DescriptorAllocator::CreateAllocatorPage()
{
    auto newPage = std::make_shared<DescriptorAllocatorPage>( m_HeapType, m_NumDescriptorsPerHeap );

    m_HeapPool.emplace_back( newPage );
    m_AvailableHeaps.insert( m_HeapPool.size() - 1 );

    return newPage;
}
```

The DescriptorAllocator::CreateAllocatorPage method is very simple. On line 17 a new DescriptorAllocatorPage is created and added to the pool. On line 20, the index of the page in the pool is added to the m_AvailableHeaps set.

On line 22, the new page is returned to the calling function.

DescriptorAllocator::Allocate

The Allocate method allocates a contiguous block of descriptors from a descriptor heap. The method iterates through the available descriptor heaps (pages) and tries to allocate the requested number of descriptors until a descriptor heap (page) is able to fulfill the requested allocation. If there are no descriptor heaps that can fulfill the request, then a new descriptor heap (page) is created that can fulfill the request.

```cpp
DescriptorAllocation DescriptorAllocator::Allocate(uint32_t numDescriptors)
{
    std::lock_guard<std::mutex> lock( m_AllocationMutex );

    DescriptorAllocation allocation;

    auto iter = m_AvailableHeaps.begin();
    while ( iter != m_AvailableHeaps.end() )
    {
        auto allocatorPage = m_HeapPool[*iter];

        allocation = allocatorPage->Allocate( numDescriptors );

        if ( allocatorPage->NumFreeHandles() == 0 )
        {
            // erase() already returns the iterator to the next element,
            // so the iterator must not be incremented again.
            iter = m_AvailableHeaps.erase( iter );
        }
        else
        {
            ++iter;
        }

        // A valid allocation has been found.
        if ( !allocation.IsNull() )
        {
            break;
        }
    }
```

Before allocating any descriptors, the m_AllocationMutex mutex is locked to ensure the current thread has exclusive access to the allocator.

The result of the allocation is stored in the allocation variable defined on line 29.

On lines 31-47, the available descriptor heaps are iterated and on line 35 an allocation of the requested number of descriptors is made. If the allocator page was able to satisfy the requested number of descriptors, then a valid descriptor allocation is returned. If the allocation resulted in the allocator page becoming exhausted (the number of free descriptor handles reaches 0) then the index of the current page is removed from the set of available heaps (on line 39).

If a valid descriptor handle was allocated from the allocator page (the descriptor handle is not null) then the loop breaks on line 45.

If there were no available allocator pages (which is the case when the DescriptorAllocator is created) or none of the available allocator pages could satisfy the request, then a new allocator page is created.

```cpp
    // No available heap could satisfy the requested number of descriptors.
    if ( allocation.IsNull() )
    {
        m_NumDescriptorsPerHeap = std::max( m_NumDescriptorsPerHeap, numDescriptors );
        auto newPage = CreateAllocatorPage();

        allocation = newPage->Allocate( numDescriptors );
    }

    return allocation;
}
```

On line 50, the descriptor allocation is checked for validity. If it is still an invalid descriptor (a null descriptor) then a new descriptor page, that is at least as large as the number of requested descriptors, is created on line 53 using the DescriptorAllocator::CreateAllocatorPage method described earlier.

On line 55, the requested allocation is made (which should be guaranteed to succeed) and the resulting allocation is returned to the caller on line 58.
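The page selection logic can be exercised without a graphics device. The following is a simplified sketch under stated assumptions: CountingPageSketch is a hypothetical page that only counts free descriptors (it ignores fragmentation and the free list), PoolSketch is an invented stand-in for the DescriptorAllocator, and no locking is performed. Unlike the method above, the sketch returns the index of the page that satisfied the request instead of a DescriptorAllocation.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <set>
#include <vector>

// Hypothetical page that only counts free descriptors.
struct CountingPageSketch
{
    std::uint32_t freeHandles;

    bool Allocate(std::uint32_t numDescriptors)
    {
        if (numDescriptors > freeHandles) return false;
        freeHandles -= numDescriptors;
        return true;
    }
};

// Hypothetical, single-threaded stand-in for the page pool.
struct PoolSketch
{
    std::uint32_t descriptorsPerPage;
    std::vector<CountingPageSketch> pool;
    std::set<std::size_t> available; // Indices of pages with free descriptors.

    // Returns the index of the page that satisfied the request.
    std::size_t Allocate(std::uint32_t numDescriptors)
    {
        for (auto it = available.begin(); it != available.end(); ++it)
        {
            const std::size_t index = *it;
            if (pool[index].Allocate(numDescriptors))
            {
                if (pool[index].freeHandles == 0)
                {
                    // Safe: the iterator is not used again after erasing.
                    available.erase(it);
                }
                return index;
            }
        }

        // No available page could satisfy the request: create a page that is
        // at least as large as the request.
        descriptorsPerPage = std::max(descriptorsPerPage, numDescriptors);
        pool.push_back(CountingPageSketch{descriptorsPerPage});
        pool.back().Allocate(numDescriptors);
        if (pool.back().freeHandles > 0)
        {
            available.insert(pool.size() - 1);
        }
        return pool.size() - 1;
    }
};
```

Starting with 4 descriptors per page, requests for 3, 2, 1 and 8 descriptors land on pages 0, 1, 0 and 2 respectively, and the last request grows the page size to 8.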

DescriptorAllocator::ReleaseStaleDescriptors

The last method of the DescriptorAllocator class is the ReleaseStaleDescriptors method. The ReleaseStaleDescriptors method iterates over all of the descriptor heap pages and calls the page’s ReleaseStaleDescriptors method. If, after releasing the stale descriptors, the page has free handles, it’s added to the list of available heaps.

```cpp
void DescriptorAllocator::ReleaseStaleDescriptors( uint64_t frameNumber )
{
    std::lock_guard<std::mutex> lock( m_AllocationMutex );

    for ( size_t i = 0; i < m_HeapPool.size(); ++i )
    {
        auto page = m_HeapPool[i];

        page->ReleaseStaleDescriptors( frameNumber );

        if ( page->NumFreeHandles() > 0 )
        {
            m_AvailableHeaps.insert( i );
        }
    }
}
```

In order to prevent modifications of the DescriptorAllocator in other threads, the m_AllocationMutex mutex is locked on line 63.

On lines 65-75, the pages of the heap pool are iterated, calling each page’s ReleaseStaleDescriptors method. The implementation of the DescriptorAllocatorPage::ReleaseStaleDescriptors method is shown in the following sections.

Pages that have free descriptor handles are added to the set of available heaps on line 73. It’s okay to add the same index to the set multiple times since the std::set is guaranteed to only store unique values.
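The uniqueness guarantee of std::set can be checked with a small sketch. MarkAvailable is a hypothetical helper written for this illustration only. It relies on the fact that std::set::insert returns a pair whose second member reports whether the value was actually inserted.

```cpp
#include <cassert>
#include <cstddef>
#include <set>

// Hypothetical helper: marks a page index as available and reports whether
// the index was newly added to the set.
inline bool MarkAvailable(std::set<std::size_t>& availableHeaps, std::size_t pageIndex)
{
    // insert() returns a pair; .second is false when the value was already present.
    return availableHeaps.insert(pageIndex).second;
}
```

Calling the helper twice with the same index leaves a single entry in the set.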

View the full source code for DescriptorAllocator.cpp


DescriptorAllocatorPage Class

The purpose of the DescriptorAllocatorPage class is to provide the free list allocator strategy for an ID3D12DescriptorHeap. The DescriptorAllocatorPage class is not intended to be used outside of the DescriptorAllocator class so the library end user doesn’t necessarily need to know the details of this class. Knowing the details of this class is more interesting to someone who is writing their own DirectX 12 library or to someone who wants to understand the implementation details provided by the DX12Lib project that has been created for the purpose of these tutorials. As previously mentioned, the implementation of this class is heavily inspired by Variable Size Memory Allocations Manager from Diligent Graphics [2].

The DescriptorAllocatorPage class must be able to satisfy descriptor allocation requests but it also needs to provide some functions to query the number of free handles and to check to see if it has sufficient space to satisfy a request. The DescriptorAllocatorPage provides the following (public) methods:

- HasSpace: Check to see if the DescriptorAllocatorPage has a contiguous block of descriptors that is large enough to satisfy a request.

- NumFreeHandles: Returns the number of available descriptor handles in the descriptor heap. Note that due to fragmentation of the free list, allocations that are less than or equal to the number of free handles could still fail.

- Allocate: Allocates a number of contiguous descriptors from the descriptor heap. If the DescriptorAllocatorPage is not able to satisfy the request, this function will return a null DescriptorAllocation.

- Free: Returns a DescriptorAllocation back to the heap. Since descriptors can’t be reused until the command list that is referencing them has finished executing on the command queue, the descriptors are not returned directly to the heap until the render frame has finished executing.

- ReleaseStaleDescriptors: Returns any free’d descriptors back to the descriptor heap for reuse.

DescriptorAllocatorPage Header

The declaration of the DescriptorAllocatorPage class is slightly more elaborate than the DescriptorAllocator class described in the previous section. The DescriptorAllocatorPage class is not only a wrapper for an ID3D12DescriptorHeap but also implements a free list allocator to manage the descriptors in the heap.

```cpp
#include "DescriptorAllocation.h"

#include <d3d12.h>

#include <wrl.h>

#include <map>
#include <memory>
#include <mutex>
#include <queue>
```

Since the DescriptorAllocatorPage::Allocate method (shown later) returns a DescriptorAllocation object by value, the header file for DescriptorAllocation class needs to be included on line 37 (a forward declaration is not sufficient).

The d3d12.h header file is required for the ID3D12DescriptorHeap.

The wrl.h header file included on line 41 is required for the ComPtr template class.

The map, memory, mutex, and queue headers are required for the STL types that are used by the DescriptorAllocatorPage class.

```cpp
class DescriptorAllocatorPage : public std::enable_shared_from_this<DescriptorAllocatorPage>
{
public:
    DescriptorAllocatorPage( D3D12_DESCRIPTOR_HEAP_TYPE type, uint32_t numDescriptors );

    D3D12_DESCRIPTOR_HEAP_TYPE GetHeapType() const;

    /**
     * Check to see if this descriptor page has a contiguous block of descriptors
     * large enough to satisfy the request.
     */
    bool HasSpace( uint32_t numDescriptors ) const;
```

The DescriptorAllocatorPage class publicly inherits from the std::enable_shared_from_this template class. The std::enable_shared_from_this template class provides the shared_from_this member function which enables the DescriptorAllocatorPage class to retrieve a std::shared_ptr from itself (which will be used in the DescriptorAllocatorPage::Allocate method shown later). This requires the DescriptorAllocatorPage class to be created from a shared pointer using either std::make_shared or std::shared_ptr<T>( new T(...) ). This requirement is acceptable in this case since the DescriptorAllocatorPage class should only be used by the DescriptorAllocator class. On line 17 of the DescriptorAllocator::CreateAllocatorPage method shown previously, the DescriptorAllocatorPage is created using the std::make_shared method.
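To illustrate how shared_from_this lets an allocation keep its page alive, here is a minimal sketch. SharedPageSketch and PageAllocationSketch are hypothetical types introduced for this example, not the classes from the DX12Lib project.

```cpp
#include <cassert>
#include <memory>

struct SharedPageSketch;

// Hypothetical allocation that stores a shared pointer back to its page.
struct PageAllocationSketch
{
    std::shared_ptr<SharedPageSketch> page;
};

// Hypothetical page. It must be owned by a std::shared_ptr before
// shared_from_this() is called.
struct SharedPageSketch : public std::enable_shared_from_this<SharedPageSketch>
{
    PageAllocationSketch Allocate()
    {
        // Hand out a shared pointer to this page so the page cannot be
        // destroyed while the allocation is still alive.
        return PageAllocationSketch{ shared_from_this() };
    }
};
```

After creating the page with std::make_shared and calling Allocate once, the page is referenced by two shared pointers: the one held by the creator and the one stored in the allocation.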

The parameterized constructor for the DescriptorAllocatorPage class is declared on line 51. The constructor takes two arguments: the type of descriptor heap to create and the number of descriptors to allocate in the descriptor heap.

The GetHeapType method declared on line 53 simply returns the descriptor heap type that was used to construct the DescriptorAllocatorPage.

The HasSpace method declared on line 59 is used to check if the DescriptorAllocatorPage has a contiguous block of descriptors in the descriptor heap that is large enough to satisfy a request. It is often more efficient to check whether an allocation request will succeed before making the request than to make the request and then check for failure.

```cpp
    /**
     * Get the number of available handles in the heap.
     */
    uint32_t NumFreeHandles() const;

    /**
     * Allocate a number of descriptors from this descriptor heap.
     * If the allocation cannot be satisfied, then a NULL descriptor
     * is returned.
     */
    DescriptorAllocation Allocate( uint32_t numDescriptors );
```

The NumFreeHandles method declared on line 64 checks how many descriptor handles the DescriptorAllocatorPage still has available. Due to fragmentation of the free list, an allocation request of a contiguous block of descriptors that is less than the total number of free handles could still fail. For example, the fragmented free list shown in the previous image has 544 free descriptors but the largest contiguous block is only 128 descriptors wide.
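The difference between the total number of free handles and the largest contiguous block can be shown with a small sketch. FragmentedPageSketch is a hypothetical type that stores only a size-to-offset multimap, and it sums the block sizes on demand, whereas the real class keeps a running count in m_NumFreeHandles. The block sizes used to exercise it are made up.

```cpp
#include <cassert>
#include <cstdint>
#include <map>

// Hypothetical page that stores its free blocks as size -> offset.
struct FragmentedPageSketch
{
    std::multimap<std::uint32_t, std::uint32_t> freeListBySize;

    // Total number of free descriptors, regardless of fragmentation.
    std::uint32_t NumFreeHandles() const
    {
        std::uint32_t total = 0;
        for (const auto& entry : freeListBySize)
        {
            total += entry.first;
        }
        return total;
    }

    // True only if a single contiguous block can hold the request.
    bool HasSpace(std::uint32_t numDescriptors) const
    {
        return freeListBySize.lower_bound(numDescriptors) != freeListBySize.end();
    }
};
```

With free blocks of 32, 16 and 64 descriptors, the page reports 112 free handles, yet a request for 100 contiguous descriptors cannot be satisfied.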

The Allocate method declared on line 71 is used to allocate a number of descriptors from the descriptor heap. This method returns a DescriptorAllocation. If the allocation fails, a null DescriptorAllocation is returned. To check if the descriptor is valid, the DescriptorAllocation::IsNull method is used. This method is shown later in the section about the DescriptorAllocation class.

```cpp
    /**
     * Return a descriptor back to the heap.
     * @param frameNumber Stale descriptors are not freed directly, but put
     * on a stale allocations queue. Stale allocations are returned to the heap
     * using the DescriptorAllocatorPage::ReleaseStaleDescriptors method.
     */
    void Free( DescriptorAllocation&& descriptorHandle, uint64_t frameNumber );

    /**
     * Return the stale descriptors back to the descriptor heap.
     */
    void ReleaseStaleDescriptors( uint64_t frameNumber );
```

The Free method declared on line 79 is used to free a DescriptorAllocation that was previously allocated using the DescriptorAllocatorPage::Allocate method. It is not required to call this method directly since the DescriptorAllocation class will automatically free itself back to the DescriptorAllocatorPage it came from if it is no longer in use. This method takes the DescriptorAllocation as an r-value reference which implies that the DescriptorAllocation is moved into the function leaving the original DescriptorAllocation invalid.
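The effect of passing an object as an r-value reference can be sketched with a small move-only type. HandleSketch and FreeSketch are hypothetical names used only here, and the move behaviour shown is an assumption about how a type like DescriptorAllocation might behave rather than its actual implementation.

```cpp
#include <cassert>
#include <cstdint>
#include <utility>

// Hypothetical move-only handle standing in for DescriptorAllocation.
class HandleSketch
{
public:
    explicit HandleSketch(std::uint32_t numHandles = 0) : m_NumHandles(numHandles) {}

    HandleSketch(const HandleSketch&) = delete;
    HandleSketch& operator=(const HandleSketch&) = delete;

    // Moving transfers the handles and leaves the source null.
    HandleSketch(HandleSketch&& other) noexcept : m_NumHandles(other.m_NumHandles)
    {
        other.m_NumHandles = 0;
    }

    bool IsNull() const { return m_NumHandles == 0; }
    std::uint32_t GetNumHandles() const { return m_NumHandles; }

private:
    std::uint32_t m_NumHandles;
};

// Binding to an r-value reference does not move by itself; ownership is
// transferred when the function move-constructs its own object from it.
inline std::uint32_t FreeSketch(HandleSketch&& handle)
{
    HandleSketch taken(std::move(handle));
    return taken.GetNumHandles();
}
```

The caller has to write FreeSketch(std::move(handle)) explicitly, which makes the transfer of ownership visible at the call site.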

The ReleaseStaleDescriptors method declared on line 84 releases the stale descriptors back to the descriptor heap for reuse. This method takes the completed frame number as its only argument. All of the descriptors that were released during that frame will be returned to the heap.

The DescriptorAllocatorPage defines a few additional methods that are internal to this class.

```cpp
protected:

    // Compute the offset of the descriptor handle from the start of the heap.
    uint32_t ComputeOffset( D3D12_CPU_DESCRIPTOR_HANDLE handle );

    // Adds a new block to the free list.
    void AddNewBlock( uint32_t offset, uint32_t numDescriptors );

    // Free a block of descriptors.
    // This will also merge free blocks in the free list to form larger blocks
    // that can be reused.
    void FreeBlock( uint32_t offset, uint32_t numDescriptors );
```

The ComputeOffset method computes the number of descriptors from the base descriptor to the specified descriptor handle. This method is used to determine where a descriptor needs to be placed back in the heap when the descriptor is free’d.
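One plausible way to compute such an offset is to divide the byte distance between the two handles by the descriptor increment size. The sketch below assumes exactly that. CpuHandleSketch is a hypothetical stand-in for D3D12_CPU_DESCRIPTOR_HANDLE (which exposes a single ptr member), and ComputeOffsetSketch is an invented free function, not the method of the DescriptorAllocatorPage class.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical stand-in for D3D12_CPU_DESCRIPTOR_HANDLE.
struct CpuHandleSketch
{
    std::size_t ptr;
};

// Number of descriptors between the base handle and the given handle.
// Assumes the handle belongs to the same heap as the base handle.
inline std::uint32_t ComputeOffsetSketch(CpuHandleSketch base, CpuHandleSketch handle, std::uint32_t incrementSize)
{
    assert(incrementSize != 0 && handle.ptr >= base.ptr);
    return static_cast<std::uint32_t>((handle.ptr - base.ptr) / incrementSize);
}
```

For example, with an increment size of 32 bytes, a handle that is 160 bytes past the base handle is at descriptor offset 5.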

The AddNewBlock method adds a block of descriptors to the free list. This method is used to initialize the free list (with a single block containing all descriptors), when splitting a block of descriptors during allocation, and for merging neighboring blocks when descriptors are free’d.

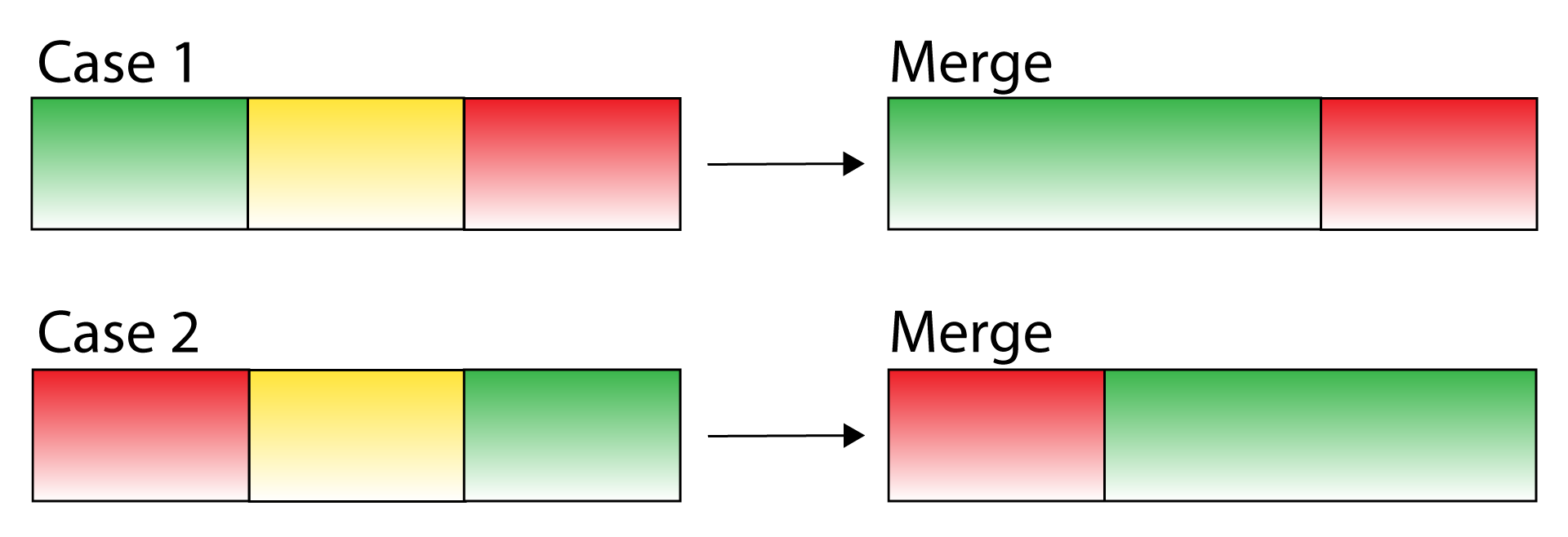

The FreeBlock method is used to free a block of descriptors. This method is used by the ReleaseStaleDescriptors method to commit the stale descriptors back to the descriptor heap. The FreeBlock method also checks if neighboring blocks in the free list can be merged. Merging free blocks in the free list reduces the fragmentation in the free list.
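Merging neighboring blocks can be sketched with a single map keyed on offset. This is a simplified illustration: FreeByOffsetSketch and FreeBlockSketchFn are hypothetical names, and the sketch omits the size-sorted map and the free handle counter that a complete implementation would also have to update.

```cpp
#include <cassert>
#include <cstdint>
#include <iterator>
#include <map>

// Hypothetical free list: block offset -> block size.
using FreeByOffsetSketch = std::map<std::uint32_t, std::uint32_t>;

inline void FreeBlockSketchFn(FreeByOffsetSketch& freeList, std::uint32_t offset, std::uint32_t size)
{
    // First free block that starts after the block being freed.
    auto next = freeList.upper_bound(offset);

    // Merge with the previous block if it ends exactly where this block starts.
    if (next != freeList.begin())
    {
        auto prev = std::prev(next);
        if (prev->first + prev->second == offset)
        {
            offset = prev->first;
            size += prev->second;
            freeList.erase(prev); // Only invalidates prev; next stays valid.
        }
    }

    // Merge with the next block if it starts exactly where this block ends.
    if (next != freeList.end() && offset + size == next->first)
    {
        size += next->second;
        freeList.erase(next);
    }

    freeList[offset] = size;
}
```

Freeing a block of 32 descriptors at offset 16 between free blocks [0, 16) and [48, 64) produces a single free block of 64 descriptors at offset 0.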

The DescriptorAllocatorPage class also defines some private data members.

```cpp
private:
    // The offset (in descriptors) within the descriptor heap.
    using OffsetType = uint32_t;
    // The number of descriptors that are available.
    using SizeType = uint32_t;
```

In order to improve code readability and reduce ambiguity, the OffsetType type alias is defined to refer to an offset (in descriptors) within the descriptor heap. The SizeType type alias is defined to refer to the number of descriptors in a block (in the free list).

```cpp
    struct FreeBlockInfo;
    // A map that lists the free blocks by the offset within the descriptor heap.
    using FreeListByOffset = std::map<OffsetType, FreeBlockInfo>;

    // A map that lists the free blocks by size.
    // Needs to be a multimap since multiple blocks can have the same size.
    using FreeListBySize = std::multimap<SizeType, FreeListByOffset::iterator>;

    struct FreeBlockInfo
    {
        FreeBlockInfo( SizeType size )
            : Size( size )
        {}

        SizeType Size;
        FreeListBySize::iterator FreeListBySizeIt;
    };
```

The FreeBlockInfo struct is forward declared on line 105 and defined on line 113. The forward declaration of the FreeBlockInfo struct is required to create the FreeListByOffset type alias on line 107. The FreeListByOffset type is an alias of a std::map which maps the offset of each free block within the descriptor heap to its FreeBlockInfo.

The FreeListBySize type is an alias of a std::multimap that provides a mechanism to quickly find the first block in the free list that can satisfy an allocation request. The FreeListBySize type needs to be a std::multimap since there can be many blocks in the free list with the same size.

The FreeBlockInfo struct simply stores the size of the block in the free list and a reference (iterator) to its entry in the FreeListBySize map. The FreeBlockInfo struct stores the iterator to its entry in the FreeListBySize map so that the entry can be quickly removed (without searching) when merging neighboring blocks in the free list.

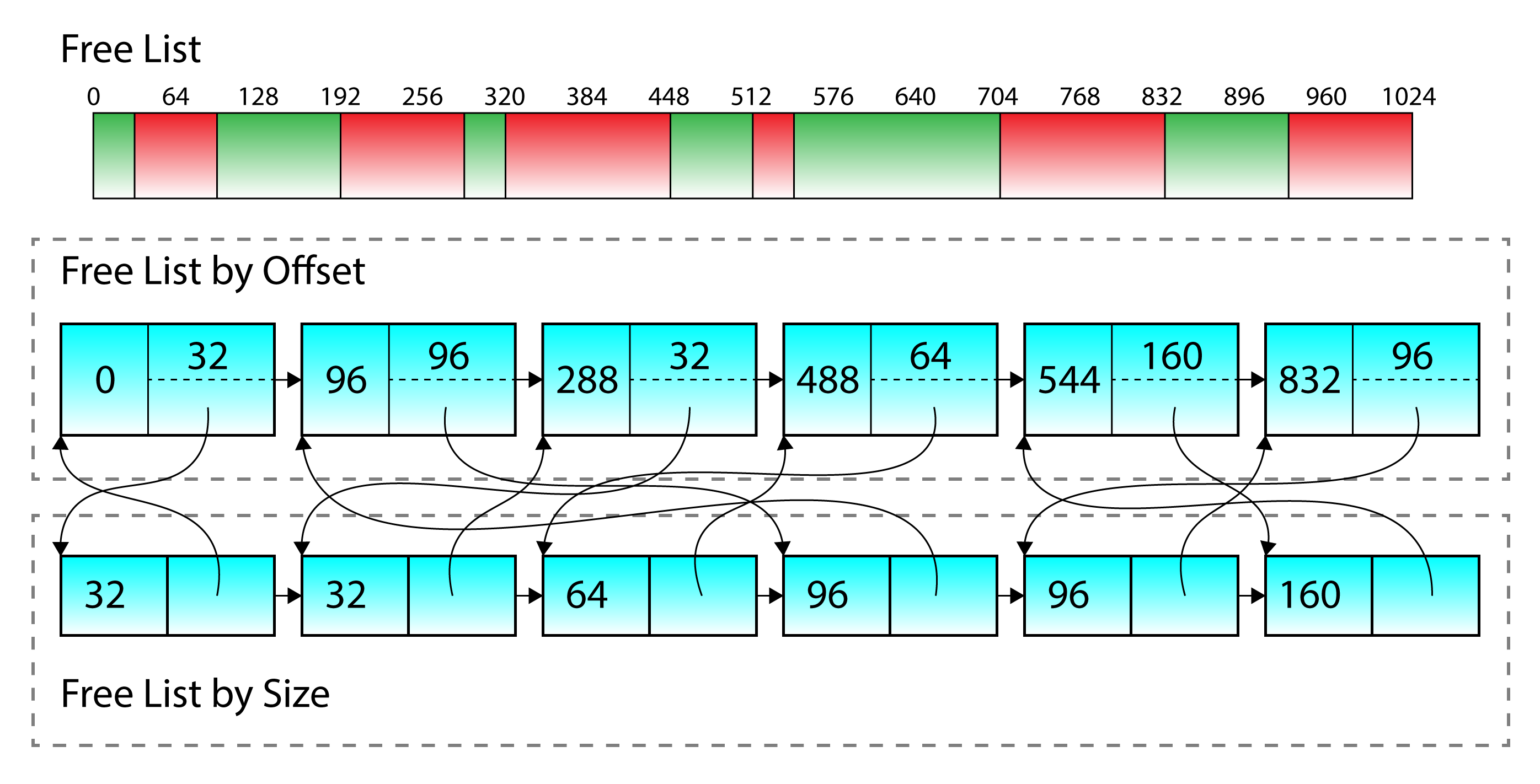

The image shows an example of a free list after several allocations have been made. The FreeListByOffset data structure (top) stores a reference to the corresponding entry in the FreeListBySize map (bottom). Similarly, the FreeListBySize map stores a pointer back to the corresponding entry in the FreeListByOffset map.

The image above shows an example of a free list after several allocations have been made. The FreeListByOffset data structure stores a reference to the corresponding entry in the FreeListBySize map. Similarly, each entry in the FreeListBySize map stores a reference back to the corresponding entry in the FreeListByOffset map. This solution resembles a bi-directional map (Bimap in Boost) which provides optimized searching on both offset and size of each entry in the free list.
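The cross-referencing between the two maps can be sketched as follows. The type names (BlockInfoSketch, ByOffsetSketch, BySizeSketch, FreeListSketch) and the RemoveBlock helper are hypothetical and exist only for this illustration. The sketch mirrors the type aliases shown above, which rely on the standard library accepting a forward-declared mapped type in the alias.

```cpp
#include <cassert>
#include <cstdint>
#include <map>

struct BlockInfoSketch;
using ByOffsetSketch = std::map<std::uint32_t, BlockInfoSketch>;
using BySizeSketch   = std::multimap<std::uint32_t, ByOffsetSketch::iterator>;

struct BlockInfoSketch
{
    BlockInfoSketch(std::uint32_t size) : Size(size) {}

    std::uint32_t Size;
    BySizeSketch::iterator BySizeIt; // Points at this block's entry in the size map.
};

struct FreeListSketch
{
    ByOffsetSketch byOffset;
    BySizeSketch   bySize;

    // Inserts the block in both maps and links the two entries together.
    void AddNewBlock(std::uint32_t offset, std::uint32_t numDescriptors)
    {
        auto offsetIt = byOffset.emplace(offset, numDescriptors).first;
        auto sizeIt   = bySize.emplace(numDescriptors, offsetIt);
        offsetIt->second.BySizeIt = sizeIt;
    }

    // Removes a block from both maps without searching the size map.
    void RemoveBlock(ByOffsetSketch::iterator offsetIt)
    {
        bySize.erase(offsetIt->second.BySizeIt);
        byOffset.erase(offsetIt);
    }
};
```

Because each entry stores an iterator to its counterpart, removing a block found by offset does not require a search through all blocks of the same size.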

The StaleDescriptorInfo struct is used to keep track of descriptors in the descriptor heap that have been freed but can’t be reused until the frame in which they were freed is finished executing on the GPU.

```cpp
    struct StaleDescriptorInfo
    {
        StaleDescriptorInfo( OffsetType offset, SizeType size, uint64_t frame )
            : Offset( offset )
            , Size( size )
            , FrameNumber( frame )
        {}

        // The offset within the descriptor heap.
        OffsetType Offset;
        // The number of descriptors
        SizeType Size;
        // The frame number that the descriptor was freed.
        uint64_t FrameNumber;
    };
```

The StaleDescriptorInfo struct tracks the offset of the first descriptor and the number of descriptors in the descriptor range. The FrameNumber member stores the frame in which the descriptors were freed.

```cpp
    // Stale descriptors are queued for release until the frame that they were freed
    // has completed.
    using StaleDescriptorQueue = std::queue<StaleDescriptorInfo>;

    FreeListByOffset m_FreeListByOffset;
    FreeListBySize m_FreeListBySize;
    StaleDescriptorQueue m_StaleDescriptors;
```

The StaleDescriptorQueue is a type alias for a queue of StaleDescriptorInfos.

The m_FreeListByOffset, m_FreeListBySize, and m_StaleDescriptors member variables are the necessary data structures to track the state of the free list.
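The deferred release of stale descriptors can be sketched with a plain std::queue. StaleInfoSketch and StaleQueueSketch are hypothetical types, the sketch only counts free handles instead of updating a free list, and the `<=` comparison against the completed frame number is an assumption (it presumes entries are queued in increasing frame order).

```cpp
#include <cassert>
#include <cstdint>
#include <queue>

// Hypothetical record of a freed descriptor range.
struct StaleInfoSketch
{
    std::uint32_t offset;
    std::uint32_t size;
    std::uint64_t frameNumber;
};

// Hypothetical page that defers the release of freed descriptors.
struct StaleQueueSketch
{
    std::queue<StaleInfoSketch> stale;
    std::uint32_t numFreeHandles = 0;

    // Freed descriptors are queued instead of being reused right away.
    void Free(std::uint32_t offset, std::uint32_t size, std::uint64_t frameNumber)
    {
        stale.push(StaleInfoSketch{offset, size, frameNumber});
    }

    // Releases every queued range whose frame has completed.
    void ReleaseStaleDescriptors(std::uint64_t completedFrame)
    {
        while (!stale.empty() && stale.front().frameNumber <= completedFrame)
        {
            numFreeHandles += stale.front().size;
            stale.pop();
        }
    }
};
```

Descriptors freed in a frame that has not completed yet stay in the queue until a later call.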

```cpp
    Microsoft::WRL::ComPtr<ID3D12DescriptorHeap> m_d3d12DescriptorHeap;
    D3D12_DESCRIPTOR_HEAP_TYPE m_HeapType;
    CD3DX12_CPU_DESCRIPTOR_HANDLE m_BaseDescriptor;
    uint32_t m_DescriptorHandleIncrementSize;
    uint32_t m_NumDescriptorsInHeap;
    uint32_t m_NumFreeHandles;

    std::mutex m_AllocationMutex;
};
```

On line 147, the m_d3d12DescriptorHeap member variable is declared. It holds the underlying ID3D12DescriptorHeap object.

The m_HeapType variable defines the type of descriptor heap used by the DescriptorAllocatorPage class.

Since the increment size of a descriptor within a descriptor heap is vendor specific, it must be queried at runtime (see Tutorial 1 for more information on descriptor heaps). The descriptor increment size is stored in the m_DescriptorHandleIncrementSize member variable.

The total number of descriptors in the descriptor heap is saved in the m_NumDescriptorsInHeap member variable and the total number of remaining descriptors in the heap is stored in the m_NumFreeHandles member variable.

The m_AllocationMutex defined on line 154 is used to ensure that allocations and deallocations are safe across multiple threads.

View the full source code for DescriptorAllocatorPage.h


DescriptorAllocatorPage Preamble

The DescriptorAllocatorPage class requires a few additional headers in order to compile.

```cpp
#include <DX12LibPCH.h>

#include <DescriptorAllocatorPage.h>
#include <Application.h>
```

The DX12LibPCH.h header file is the precompiled header for the DX12Lib project.

The DescriptorAllocatorPage.h header file is described in the previous section.

The Application.h header file provides access to the Application class. The Application class was briefly described in Tutorial 2. The Application class is used to get access to the ID3D12Device object.

DescriptorAllocatorPage::DescriptorAllocatorPage

The parameterized constructor for the DescriptorAllocatorPage class takes the heap type and the number of descriptors to allocate in the heap as arguments.

```cpp
DescriptorAllocatorPage::DescriptorAllocatorPage( D3D12_DESCRIPTOR_HEAP_TYPE type, uint32_t numDescriptors )
    : m_HeapType( type )
    , m_NumDescriptorsInHeap( numDescriptors )
{
    auto device = Application::Get().GetDevice();

    D3D12_DESCRIPTOR_HEAP_DESC heapDesc = {};
    heapDesc.Type = m_HeapType;
    heapDesc.NumDescriptors = m_NumDescriptorsInHeap;

    ThrowIfFailed( device->CreateDescriptorHeap( &heapDesc, IID_PPV_ARGS( &m_d3d12DescriptorHeap ) ) );

    m_BaseDescriptor = m_d3d12DescriptorHeap->GetCPUDescriptorHandleForHeapStart();
    m_DescriptorHandleIncrementSize = device->GetDescriptorHandleIncrementSize( m_HeapType );
    m_NumFreeHandles = m_NumDescriptorsInHeap;

    // Initialize the free lists
    AddNewBlock( 0, m_NumFreeHandles );
}
```

On line 10, a pointer to the ID3D12Device is retrieved from the Application class.

Before creating the ID3D12DescriptorHeap object, it must be described. The D3D12_DESCRIPTOR_HEAP_DESC is used to describe the ID3D12DescriptorHeap and has the following members [3]:

- D3D12_DESCRIPTOR_HEAP_TYPE Type: Specifies the types of descriptors in the heap.
- UINT NumDescriptors: The number of descriptors in the heap.
- D3D12_DESCRIPTOR_HEAP_FLAGS Flags: A combination of D3D12_DESCRIPTOR_HEAP_FLAGS values that are combined by using a bitwise OR operation. The following flags are currently available:
  - D3D12_DESCRIPTOR_HEAP_FLAG_NONE: Indicates default usage of a heap.
  - D3D12_DESCRIPTOR_HEAP_FLAG_SHADER_VISIBLE: This flag can optionally be set on a descriptor heap to indicate it is bound on a command list for reference by shaders. Descriptor heaps created without this flag allow applications the option to stage descriptors in CPU memory before copying them to a shader visible descriptor heap, as a convenience. But it is also fine for applications to directly create descriptors into shader visible descriptor heaps with no requirement to stage anything on the CPU. This flag only applies to CBV, SRV, UAV and samplers. It does not apply to other descriptor heap types since shaders do not directly reference the other types.
- UINT NodeMask: For single-adapter operation, set this to zero. If there are multiple adapter nodes, set a bit to identify the node (one of the device’s physical adapters) to which the descriptor heap applies. Each bit in the mask corresponds to a single node. Only one bit must be set.

On line 16, the actual ID3D12DescriptorHeap is created using the ID3D12Device::CreateDescriptorHeap method.

On line 18, the m_BaseDescriptor member variable is initialized to the first descriptor handle in the heap and on line 19 the increment size of a descriptor in the descriptor heap is queried using the ID3D12Device::GetDescriptorHandleIncrementSize method. On line 20, the number of free handles in the DescriptorAllocatorPage is initialized to the number of handles in the ID3D12DescriptorHeap.

On line 23 a single block of descriptors is added to the free list using the AddNewBlock method. The new block has an offset of 0 and a size of m_NumFreeHandles.

DescriptorAllocatorPage::GetHeapType

The GetHeapType method is simply a getter method that returns the heap type.

```cpp
D3D12_DESCRIPTOR_HEAP_TYPE DescriptorAllocatorPage::GetHeapType() const
{
    return m_HeapType;
}
```

DescriptorAllocatorPage::NumFreeHandles

The NumFreeHandles method is simply a getter method that returns the number of free handles that are currently available in the heap.

```cpp
uint32_t DescriptorAllocatorPage::NumFreeHandles() const
{
    return m_NumFreeHandles;
}
```

DescriptorAllocatorPage::HasSpace

The HasSpace method is used to check if the DescriptorAllocatorPage has a free block of descriptors that is large enough to satisfy a request for a particular number of descriptors.

```cpp
bool DescriptorAllocatorPage::HasSpace( uint32_t numDescriptors ) const
{
    return m_FreeListBySize.lower_bound(numDescriptors) != m_FreeListBySize.end();
}
```

The std::multimap::lower_bound method is used to find the first entry in the free list that is not less than (in other words, greater than or equal to) the requested number of descriptors. If no such entry exists, the past-the-end iterator is returned, which indicates that the free list cannot satisfy the requested number of descriptors. If the DescriptorAllocatorPage is not able to satisfy the request, then the DescriptorAllocator will create a new page (as was shown previously in the DescriptorAllocator::Allocate method).
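The behavior of lower_bound on a size-ordered multimap can be tried in isolation. The following is a minimal sketch, not the tutorial's code: SizeIndex, HasBlockOfAtLeast, and BestFitSize are hypothetical names, and the mapped value is a plain offset rather than the iterator that FreeListBySize stores.

```cpp
#include <cstdint>
#include <map>

// Hypothetical stand-in for FreeListBySize: block size -> block offset.
// A multimap is used because several free blocks can have the same size.
using SizeIndex = std::multimap<uint32_t, uint32_t>;

// Returns true if some free block holds at least numDescriptors descriptors.
bool HasBlockOfAtLeast( const SizeIndex& freeBySize, uint32_t numDescriptors )
{
    // lower_bound returns the first entry whose key is >= numDescriptors,
    // or end() when every free block is too small.
    return freeBySize.lower_bound( numDescriptors ) != freeBySize.end();
}

// Returns the size of the smallest block that fits, or 0 if none fits.
uint32_t BestFitSize( const SizeIndex& freeBySize, uint32_t numDescriptors )
{
    auto it = freeBySize.lower_bound( numDescriptors );
    return it != freeBySize.end() ? it->first : 0;
}
```

Because the multimap is ordered by size, the entry returned by lower_bound is also the smallest block that can satisfy the request, which is why the same call can serve as a best-fit search.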

DescriptorAllocatorPage::AddNewBlock

The AddNewBlock method adds a block to the free list. The block is added to both the FreeListByOffset map and the FreeListBySize map. Both lists are linked to create the bi-directional map for optimized lookups.

```cpp
void DescriptorAllocatorPage::AddNewBlock( uint32_t offset, uint32_t numDescriptors )
{
    auto offsetIt = m_FreeListByOffset.emplace( offset, numDescriptors );
    auto sizeIt = m_FreeListBySize.emplace( numDescriptors, offsetIt.first );
    offsetIt.first->second.FreeListBySizeIt = sizeIt;
}
```

On line 43, the std::map::emplace method is used to emplace an element into the m_FreeListByOffset map. This method returns a std::pair where the first element is an iterator to the inserted element. The iterator to the inserted element is used to add an entry to the m_FreeListBySize multimap on line 44.

On line 45, the FreeListBySizeIt member variable of the FreeBlockInfo is patched to point to the corresponding entry in the m_FreeListBySize multimap.

DescriptorAllocatorPage::Allocate

The Allocate method is used to allocate descriptors from the free list. When a block of descriptors is allocated from the free list, it is possible that the existing free block needs to be split and the remaining descriptors are “returned” to the free list. For example, if only a single descriptor is requested by the caller and the free list has a free block of 100 descriptors, then the free block of 100 descriptors is removed from the free list, 1 descriptor is allocated from that block, and a free block of 99 descriptors is added back to the free list.

```cpp
DescriptorAllocation DescriptorAllocatorPage::Allocate( uint32_t numDescriptors )
{
    std::lock_guard<std::mutex> lock( m_AllocationMutex );
```

In order to prevent any race conditions that may occur when multiple threads make allocations on the same DescriptorAllocatorPage, the m_AllocationMutex is locked on line 50.

```cpp
    // There are less than the requested number of descriptors left in the heap.
    // Return a NULL descriptor and try another heap.
    if ( numDescriptors > m_NumFreeHandles )
    {
        return DescriptorAllocation();
    }

    // Get the first block that is large enough to satisfy the request.
    auto smallestBlockIt = m_FreeListBySize.lower_bound( numDescriptors );
    if ( smallestBlockIt == m_FreeListBySize.end() )
    {
        // There was no free block that could satisfy the request.
        return DescriptorAllocation();
    }
```

On lines 54 and 61 the free list is checked to make sure that there are enough free descriptor handles to satisfy the request. If there are not enough descriptor handles, a default (null) DescriptorAllocation is returned to the calling function. If these checks pass, then smallestBlockIt contains an iterator to the first entry in the FreeListBySize multimap that is not less than the requested number of descriptors.

```cpp
    // The size of the smallest block that satisfies the request.
    auto blockSize = smallestBlockIt->first;

    // The pointer to the same entry in the FreeListByOffset map.
    auto offsetIt = smallestBlockIt->second;

    // The offset in the descriptor heap.
    auto offset = offsetIt->first;
```

The smallestBlockIt is used to retrieve the size of the free block and get the iterator to the corresponding entry in the FreeListByOffset map in \(\mathcal{O}(1)\) constant time (which is better than \(\mathcal{O}(\log_2{n})\) logarithmic time complexity of the std::map::find method).

The free block that was found needs to be removed from the free list and a new block that results from splitting the free block needs to be added back to the free list.

```cpp
    // Remove the existing free block from the free list.
    m_FreeListBySize.erase( smallestBlockIt );
    m_FreeListByOffset.erase( offsetIt );

    // Compute the new free block that results from splitting this block.
    auto newOffset = offset + numDescriptors;
    auto newSize = blockSize - numDescriptors;

    if ( newSize > 0 )
    {
        // If the allocation didn't exactly match the requested size,
        // return the left-over to the free list.
        AddNewBlock( newOffset, newSize );
    }
```

On lines 77-78 the free block that was found is removed from the free list.

On lines 81-82, the offset and size of the new free block that results from splitting the current block are computed. If the size is not 0, the new block is added to the free list using the AddNewBlock method on line 88.

```cpp
    // Decrement free handles.
    m_NumFreeHandles -= numDescriptors;

    return DescriptorAllocation(
        CD3DX12_CPU_DESCRIPTOR_HANDLE( m_BaseDescriptor, offset, m_DescriptorHandleIncrementSize ),
        numDescriptors, m_DescriptorHandleIncrementSize, shared_from_this() );
}
```

The total number of free handles is decremented by the number of requested descriptors on line 92 and the resulting DescriptorAllocation is returned to the calling function on line 94.
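To experiment with the split-on-allocate behavior without a D3D12 device, the free list logic can be isolated from the descriptor heap. The following is a simplified, single-threaded sketch under stated assumptions: FreeListSketch, Block, and kInvalidOffset are hypothetical names, offsets are returned instead of a DescriptorAllocation, and a sentinel value stands in for the null allocation. As in the tutorial's design, the by-offset map is declared with a value type that is completed a few lines later, which the common standard library implementations accept.

```cpp
#include <cstddef>
#include <cstdint>
#include <map>

// Hypothetical sentinel returned when no free block can satisfy a request.
constexpr uint32_t kInvalidOffset = 0xFFFFFFFFu;

// Hypothetical, single-threaded stand-in for the free list of a page.
class FreeListSketch
{
public:
    explicit FreeListSketch( uint32_t numDescriptors )
        : m_NumFree( numDescriptors )
    {
        // Start with one free block that covers the whole range.
        if ( numDescriptors > 0 ) AddNewBlock( 0, numDescriptors );
    }

    // Returns the offset of the allocated range, or kInvalidOffset on failure.
    uint32_t Allocate( uint32_t numDescriptors )
    {
        if ( numDescriptors == 0 ) return kInvalidOffset;

        // Smallest free block that is large enough.
        auto sizeIt = m_BySize.lower_bound( numDescriptors );
        if ( sizeIt == m_BySize.end() ) return kInvalidOffset;

        uint32_t blockSize = sizeIt->first;
        auto offsetIt = sizeIt->second; // direct jump to the by-offset entry
        uint32_t offset = offsetIt->first;

        // Remove the block from both indices.
        m_BySize.erase( sizeIt );
        m_ByOffset.erase( offsetIt );

        // Return the unused tail of the block to the free list.
        uint32_t remaining = blockSize - numDescriptors;
        if ( remaining > 0 ) AddNewBlock( offset + numDescriptors, remaining );

        m_NumFree -= numDescriptors;
        return offset;
    }

    uint32_t NumFree() const { return m_NumFree; }
    std::size_t NumFreeBlocks() const { return m_ByOffset.size(); }

private:
    struct Block;
    using ByOffset = std::map<uint32_t, Block>;
    using BySize = std::multimap<uint32_t, ByOffset::iterator>;

    struct Block
    {
        explicit Block( uint32_t size ) : Size( size ) {}
        uint32_t Size;
        BySize::iterator BySizeIt; // link back into the by-size index
    };

    void AddNewBlock( uint32_t offset, uint32_t size )
    {
        auto offsetIt = m_ByOffset.emplace( offset, size ).first;
        offsetIt->second.BySizeIt = m_BySize.emplace( size, offsetIt );
    }

    ByOffset m_ByOffset;
    BySize m_BySize;
    uint32_t m_NumFree;
};
```

With a list of 100 descriptors, a request for 1 descriptor returns offset 0 and leaves a single free block of 99 descriptors starting at offset 1, which matches the example given at the start of this section.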

DescriptorAllocatorPage::ComputeOffset

The ComputeOffset method is used to compute the offset (in descriptor handles) from the base descriptor (first descriptor in the descriptor heap) to a given descriptor.

```cpp
uint32_t DescriptorAllocatorPage::ComputeOffset( D3D12_CPU_DESCRIPTOR_HANDLE handle )
{
    return static_cast<uint32_t>( handle.ptr - m_BaseDescriptor.ptr ) / m_DescriptorHandleIncrementSize;
}
```

The ComputeOffset method is used by the Free method (shown next) in order to compute the offset of a descriptor in the descriptor heap. Since a D3D12_CPU_DESCRIPTOR_HANDLE is just a structure that contains a single SIZE_T member variable, computing the offset of a descriptor in a descriptor heap is a matter of simple arithmetic.
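The arithmetic can be checked with plain integers. In the sketch below, CpuHandle is a hypothetical stand-in for D3D12_CPU_DESCRIPTOR_HANDLE, and the base address and increment size used with it are made-up values, since the real increment size is only known at runtime. This version also divides before narrowing to uint32_t, which is a small variation on the method shown above.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical stand-in for D3D12_CPU_DESCRIPTOR_HANDLE (a single SIZE_T).
struct CpuHandle
{
    std::size_t ptr;
};

// Handle of the descriptor that is `offset` descriptors past the base handle.
CpuHandle OffsetHandle( CpuHandle base, uint32_t offset, uint32_t incrementSize )
{
    return CpuHandle{ base.ptr + static_cast<std::size_t>( offset ) * incrementSize };
}

// Inverse operation: recover the descriptor index from a handle.
uint32_t ComputeOffset( CpuHandle base, CpuHandle handle, uint32_t incrementSize )
{
    return static_cast<uint32_t>( ( handle.ptr - base.ptr ) / incrementSize );
}
```

For example, with a base address of 0x1000 and an increment size of 32 bytes, the descriptor at index 5 sits 160 bytes past the base, and ComputeOffset maps that handle back to 5.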

DescriptorAllocatorPage::Free

The Free method returns a block of descriptors back to the free list. Descriptors are not immediately returned to the free list but instead are added to a queue of stale descriptors. Descriptors are only returned to the free list once the frame they were freed in is finished executing on the GPU. This ensures that descriptors are not reused until they are no longer being referenced by a GPU command.

```cpp
void DescriptorAllocatorPage::Free( DescriptorAllocation&& descriptor, uint64_t frameNumber )
{
    // Compute the offset of the descriptor within the descriptor heap.
    auto offset = ComputeOffset( descriptor.GetDescriptorHandle() );

    std::lock_guard<std::mutex> lock( m_AllocationMutex );

    // Don't add the block directly to the free list until the frame has completed.
    m_StaleDescriptors.emplace( offset, descriptor.GetNumHandles(), frameNumber );
}
```

The DescriptorAllocation doesn’t store the offset of the descriptor within the descriptor heap but the offset can be computed using the ComputeOffset method.
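The deferred-release idea behind the stale descriptor queue can be sketched on its own. The code below is an illustration under assumptions, not the tutorial's implementation: StaleRange and StaleQueueSketch are hypothetical names, the release policy (release everything freed in a frame less than or equal to the completed frame) is assumed, and ranges are assumed to be queued in non-decreasing frame order.

```cpp
#include <cstddef>
#include <cstdint>
#include <queue>
#include <vector>

// Hypothetical record of a freed range and the frame in which it was freed.
struct StaleRange
{
    uint32_t Offset;
    uint32_t Size;
    uint64_t FrameNumber;
};

// Hypothetical queue that holds freed ranges until their frame has completed.
class StaleQueueSketch
{
public:
    // Queue the range instead of returning it to the free list right away.
    void Free( uint32_t offset, uint32_t size, uint64_t frameNumber )
    {
        m_Stale.push( StaleRange{ offset, size, frameNumber } );
    }

    // Pops and returns every range freed in a frame <= completedFrame.
    // Assumes ranges were queued in non-decreasing frame order.
    std::vector<StaleRange> Release( uint64_t completedFrame )
    {
        std::vector<StaleRange> released;
        while ( !m_Stale.empty() && m_Stale.front().FrameNumber <= completedFrame )
        {
            released.push_back( m_Stale.front() );
            m_Stale.pop();
        }
        return released;
    }

    std::size_t Pending() const { return m_Stale.size(); }

private:
    std::queue<StaleRange> m_Stale;
};
```

A caller would hand each released range back to the free list. Because the queue is ordered by frame, the loop can stop at the first range whose frame has not yet completed.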